Original Link: https://www.anandtech.com/show/13971/the-xeon-entry-cpu-review-e-2174g-e-2134-e-2104g

The Xeon Entry Quad-Core CPU Review: Xeon E-2174G, E-2134, and E-2104G Tested

by Ian Cutress on March 11, 2019 10:30 AM EST- Posted in

- CPUs

- Xeon

- Enterprise CPUs

- Coffee Lake

- Xeon E

- C246

A couple of months ago we reviewed a few of the newest six-core Intel commercial CPUs that are also used in low-end servers. Intel has also launched some quad-core models, which we are focusing on today. These Xeon E quad-core processors compete directly against AMD's Ryzen Pro product line, focusing on manageability, ECC memory support, and guaranteed product longevity.

Xeon E: the New Xeon E3

When Intel moved away from its Xeon E5/E7 naming scheme for its server processors, there was a substantial outcry from IT procurement everywhere. By removing a system that was well known and replacing it with exotic metal names such as Xeon Platinum, Xeon Gold, Xeon Silver, and Xeon Bronze, everyone knew it would mean relearning Intel's product segmentation rules and also teaching it to the executives that would sign off on purchasing rules. The mainstream server market was not the only one to go through a name change - the popular workstation processor models were renamed Xeon W, and the last domino to fall was the Xeon E3-1200 processor line, now named Xeon Entry, or E for short.

Intel Xeon Naming Strategy

| Segment | Family | SNB | IVB | HSW | BDW | SKL / Future |
|---|---|---|---|---|---|---|
| Servers | E7-8000 | 'v1' | v2 | v3 | v4 | Xeon SP Platinum, Xeon SP Gold, Xeon SP Silver, Xeon SP Bronze |
| Servers | E7-4000 | 'v1' | v2 | v3 | v4 | Xeon SP |
| Servers | E7-2800 | 'v1' | v2 | - | - | Xeon SP |
| Servers | E5-4600 | 'v1' | v2 | v3 | v4 | Xeon SP |
| Servers | E5-2600 | 'v1' | v2 | v3 | v4 | Xeon SP |
| Servers | E5-2400 | 'v1' | v2 | v3 | - | Xeon SP |
| Workstations | E5-1600 | 'v1' | v2 | v3 | v4 | Xeon W |
| Workstations | E5-1400 | 'v1' | v2 | v3 | - | - |

| Segment | Family | SNB | IVB | HSW | BDW | SKL | KBL | CFL | CFL-R |
|---|---|---|---|---|---|---|---|---|---|
| Mobile | E3-1500M | - | - | - | - | v5 | v6 | E-2100 | ? |
| Consumer | E3-1200 | 'v1' | v2 | v3 | v4 | v5 | v6 | E-2100 | E-2200? |
| Comms | E3-1100 | 'v1' | v2 | - | - | - | - | - | - |
| Network | Xeon-D | - | - | - | D-1500 | D-2100 | | | |

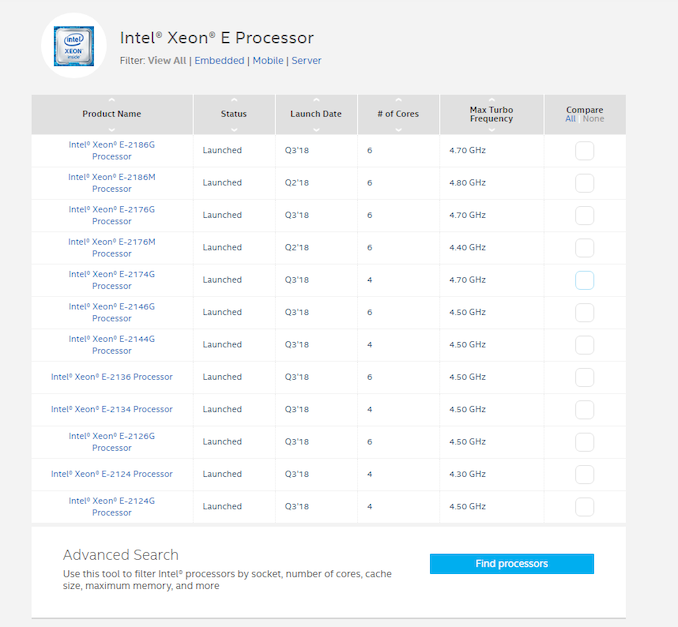

The latest launch, which would have been the Xeon E3-1200 v7, becomes the first generation of Xeon E-2100, bringing it in line with the workstation processors (Xeon W-2100) and the networking/compute crossover family (Xeon D-2100). Intel launched 11 processors, a range of quad-core and six-core parts, using the Coffee Lake microarchitecture.

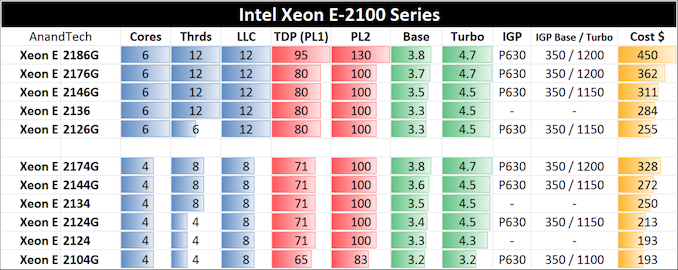

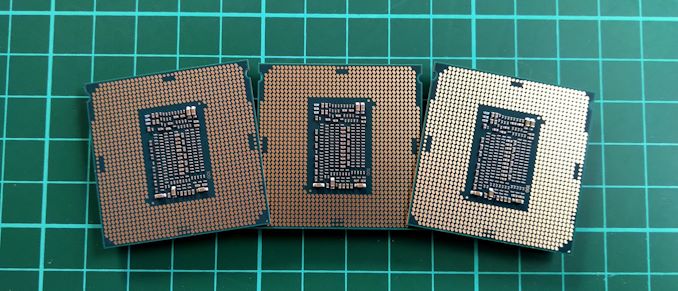

In our previous review, we tackled the six core parts: the E-2186G, the E-2176G, the E-2146G and the E-2136 processors. This time around we are focusing on the quad-core parts we have tested - the E-2174G, the E-2134, and the E-2104G. For these processors, the final digit shows the core count, and the G indicates integrated graphics.
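The naming rule in the paragraph above can be expressed as a short sketch. The `XeonEModel` type and `parse_xeon_e` function are hypothetical names used only for illustration, and the pattern assumes the rule holds only for the E-2100 parts named in this review.

```python
import re
from typing import NamedTuple


class XeonEModel(NamedTuple):
    """Illustrative container for what the model name encodes."""
    name: str
    cores: int
    has_graphics: bool


def parse_xeon_e(name: str) -> XeonEModel:
    """Decode a Xeon E-2100 model name such as 'E-2174G'.

    Assumes the rule from the text: the final digit is the core count
    and a trailing 'G' marks integrated graphics.
    """
    cleaned = name.strip()
    match = re.fullmatch(r"E-(\d{3})(\d)(G?)", cleaned)
    if match is None:
        raise ValueError(f"not a recognised Xeon E model name: {name!r}")
    _prefix, core_digit, suffix = match.groups()
    return XeonEModel(cleaned, int(core_digit), suffix == "G")


for model in ("E-2174G", "E-2134", "E-2104G", "E-2186G"):
    print(parse_xeon_e(model))
```

Applied to the parts in this review, the sketch reports four cores for the E-2174G, E-2134, and E-2104G, and marks only the E-2134 as lacking graphics.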

The Xeon E family are derivatives of the consumer processor line, but they are locked to C246 chipset motherboards and add support for ECC memory. This means they primarily fit into the commercial market, where businesses will have prebuilt systems with error correcting memory for data safety. These processors also support vPro, enabling management features across a network, and both virtualization options (VT-x and VT-d) that enable the parts for virtualized networks.

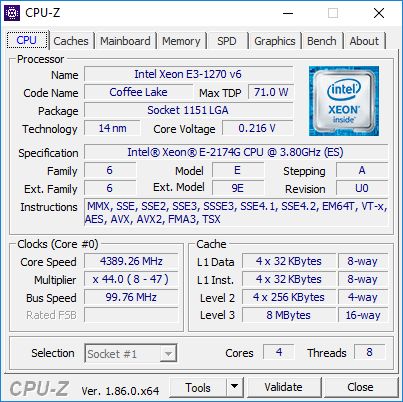

The Xeon E-2174G is the top quad-core processor of this line, with hyperthreading, a 3.8 GHz base frequency, and a 4.7 GHz turbo frequency. As we discovered in our six core review, these processors and the motherboard manufacturers tend to stick to the strict Intel defaults when it comes to power management, unlike the consumer motherboard segment. This means we should expect it to perform similarly to consumer processors in single-threaded tests, but in multithreaded tests expect it to max out at its all-core turbo frequency. With a 71W TDP, we would also expect this to be the sustained load power consumption.

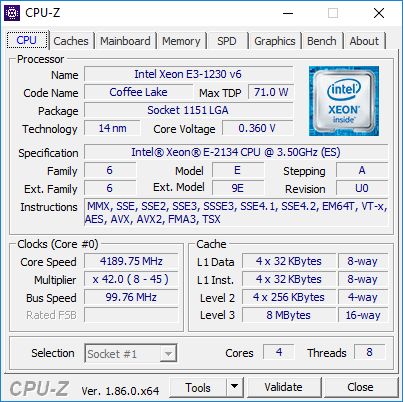

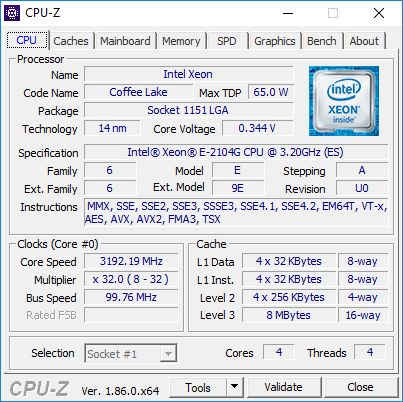

The E-2134 is a lower clocked variant without graphics; the E-2104G, however, is far more interesting. This processor is an off-roadmap part, meaning it isn't sold to retail or distributors, but to specific OEMs that wanted this configuration. In this case, it is a lower TDP quad-core processor (65W rather than 71W) with no turbo frequency: 3.2 GHz all the time when at load. It still has integrated graphics, but with the lowest graphics turbo frequency, at 1100 MHz.

Xeon E-2104G doesn't show up on Intel's Database 'ARK' under Xeon E, as it is off-roadmap

Alternatives to Xeon E

From Intel, if you still want a Xeon, then the Xeon Scalable line has some low frequency six core Bronze processors that are at $350. If you don't need ECC or manageability options, then Intel's consumer parts offer extra performance.

From AMD, these Xeon Entry processors compete against the Ryzen Pro family, which offers similar management options (through DASH), as well as ECC support (depending on the motherboard), long term stability, and a wider range of options from eight core to quad core. The Ryzen Pro parts are identical in specifications to their desktop counterparts. For anyone looking for the Ryzen Pro chips in retail packaging though, you're out of luck - AMD only sells these to OEMs. Unfortunately we haven't been sampled these parts either - we only have a solitary SKU I purchased from China with my own money.


Test Bed and Setup

As per our processor testing policy, we take a premium category motherboard suitable for the socket, and equip the system with a suitable amount of memory running at the manufacturer's maximum supported frequency. This is also typically run at JEDEC subtimings where possible. It is noted that some users are not keen on this policy, stating that sometimes the maximum supported frequency is quite low, or faster memory is available at a similar price, or that the JEDEC speeds can be prohibitive for performance. While these comments make sense, ultimately very few users apply memory profiles (either XMP or other) as they require interaction with the BIOS, and most users will fall back on JEDEC supported speeds - this includes home users as well as industry who might want to shave off a cent or two from the cost or stay within the margins set by the manufacturer. Where possible, we will extend our testing to include faster memory modules either at the same time as the review or a later date.

Test Setup

| Platform | CPUs | Motherboard | BIOS | Cooler | Memory |
|---|---|---|---|---|---|
| Intel Xeon | E-2186G, E-2176G, E-2146G, E-2136, E-2174G, E-2134, E-2104G | Supermicro X11SCA-W | v1 | TRUE Copper | Crucial Ballistix 4x4GB DDR4-2666 |
| Intel Xeon | E3-1280 v5, E3-1275 v5, E3-1270 v5 | GIGABYTE X170-Extreme ECC | F21e | Silverstone AR10-115XS* | G.Skill RipjawsV 2x16GB DDR4-2133 |
| Intel | i9-9900K, i7-9700K, i5-9600K | ASRock Z390 Gaming i7 | P1.70 | TRUE Copper | Crucial Ballistix 4x4GB DDR4-2666 |
| Intel | i7-8086K, i7-8700K, i5-8600K, i5-8400 | ASRock Z390 Gaming i7 | P1.70 | TRUE Copper | Crucial Ballistix 4x4GB DDR4-2666 |
| AMD | Ryzen 7 2700X, Ryzen 5 2600X | ASRock X370 Gaming K4 | P4.80 | Wraith Max* | G.Skill SniperX 2x8GB DDR4-2933 |
| GPU | Sapphire RX 460 2GB (CPU Tests), MSI GTX 1080 Gaming 8G (Gaming Tests) | | | | |
| PSU | Corsair AX860i, Corsair AX1200i | | | | |
| SSD | Crucial MX200 1TB | | | | |
| OS | Windows 10 x64 RS3 1709, Spectre and Meltdown Patched | | | | |

*VRM supplemented with SST-FHP141-VF 173 CFM fans

Many thanks to...

We must thank the following companies for kindly providing hardware for our multiple test beds. Some of this hardware is not in this test bed specifically, but is used in other testing.

Our New Testing Suite for 2018 and 2019

Spectre and Meltdown Hardened

In order to keep up to date with our testing, we have to update our software every so often to stay relevant. In our updates we typically implement the latest operating system, the latest patches, the latest software revisions, the newest graphics drivers, as well as add new tests or remove old ones. As regular readers will know, our CPU testing revolves around an automated test suite, and depending on how the newest software works, the suite either needs to change, be updated, have tests removed, or be rewritten completely. Last time we did a full re-write, it took the best part of a month, including regression testing (testing older processors).

One of the key elements of our testing update for 2018 (and 2019) is the fact that our scripts and systems are designed to be hardened for Spectre and Meltdown. This means making sure that all of our BIOSes are updated with the latest microcode, and all the steps are in place with our operating system with updates. In this case we are using Windows 10 x64 Enterprise 1709 with April security updates, which enforces Smeltdown (our combined name) mitigations. Users might ask why we are not running Windows 10 x64 RS4, the latest major update: this is due to some new features which are giving uneven results. Rather than spend a few weeks learning to disable them, we’re going ahead with RS3 which has been widely used.

Our previous benchmark suite was split into several segments depending on how the test is usually perceived. Our new test suite follows similar lines, and we run the tests based on:

- Power

- Memory

- Office

- System

- Render

- Encoding

- Web

- Legacy

- Integrated Gaming

- CPU Gaming

Depending on the focus of the review, the order of these benchmarks might change, or some might be left out of the main review. All of our data will reside in our benchmark database, Bench, for which there is a new ‘CPU 2019’ section for all of our new tests.

Within each section, we will have the following tests:

Power

Our power tests consist of running a substantial workload for every thread in the system, and then probing the power registers on the chip to find out details such as core power, package power, DRAM power, IO power, and per-core power. This all depends on how much information is given by the manufacturer of the chip: sometimes a lot, sometimes none at all.

We are currently running POV-Ray as our main test for Power, as it seems to hit deep into the system and is very consistent. In order to limit the number of cores for power, we use an affinity mask driven from the command line.
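As a rough illustration of what an affinity mask looks like, the sketch below builds the bitmask for the first N logical processors and formats a Windows `start` command around it. The function names and the `benchmark.exe` executable are placeholders, and this is not our actual script; it only assumes that `start /affinity` takes a hexadecimal mask.

```python
def affinity_mask(num_logical_cpus: int) -> int:
    """Return a bitmask with the lowest `num_logical_cpus` bits set."""
    if num_logical_cpus < 1:
        raise ValueError("need at least one logical CPU")
    return (1 << num_logical_cpus) - 1


def start_command(num_logical_cpus: int, executable: str) -> str:
    """Build a Windows `start` command pinned to the first N logical CPUs.

    `executable` is a placeholder; the empty "" is the window title that
    `start` expects before its switches.
    """
    mask = affinity_mask(num_logical_cpus)
    # `start /affinity` takes the mask as a hexadecimal number.
    return f'start "" /wait /affinity {mask:X} {executable}'


for cpus in (1, 2, 4, 8):
    print(cpus, start_command(cpus, "benchmark.exe"))
```

Each set bit selects one logical processor, so limiting a run to four threads corresponds to a mask of `F`, and eight threads to `FF`.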

Memory

These tests involve disabling all turbo modes in the system, forcing it to run at base frequency, and then running both a memory latency checker (Intel’s Memory Latency Checker works equally well for both platforms) and AIDA64 to probe cache bandwidth.

Office

- Chromium Compile: Windows VC++ Compile of Chrome 56 (same as 2017)

- PCMark10: Primary data will be the overview results – subtest results will be in Bench

- 3DMark Physics: We test every physics sub-test for Bench, and report the major ones (new)

- GeekBench4: By request (new)

- SYSmark 2018: Recently released by BAPCo, currently automating it into our suite (new, when feasible)

System

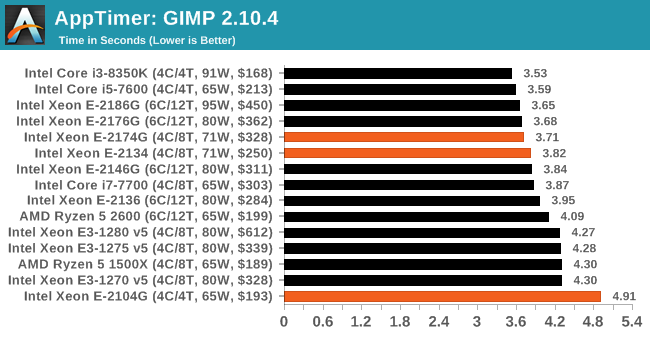

- Application Load: Time to load GIMP 2.10.4 (new)

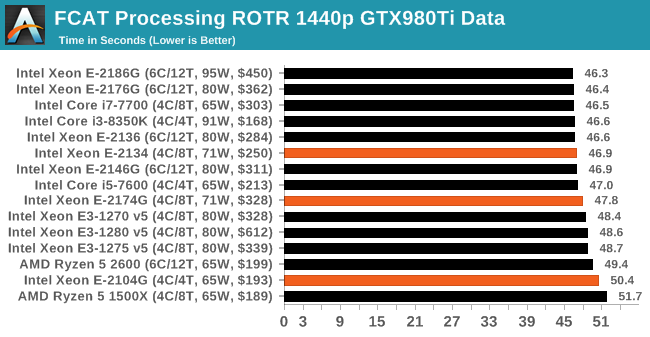

- FCAT: Time to process a 90 second ROTR 1440p recording (same as 2017)

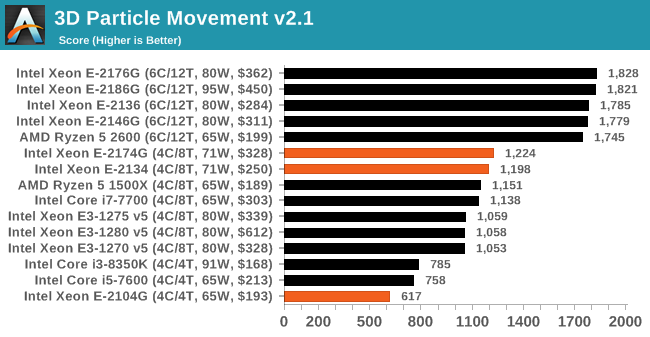

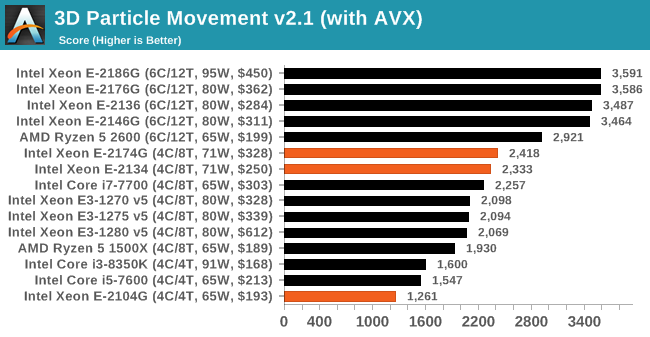

- 3D Particle Movement: Particle distribution test (same as 2017) – we also have AVX2 and AVX512 versions of this, which may be added later

- Dolphin 5.0: Console emulation test (same as 2017)

- DigiCortex: Sea Slug Brain simulation (same as 2017)

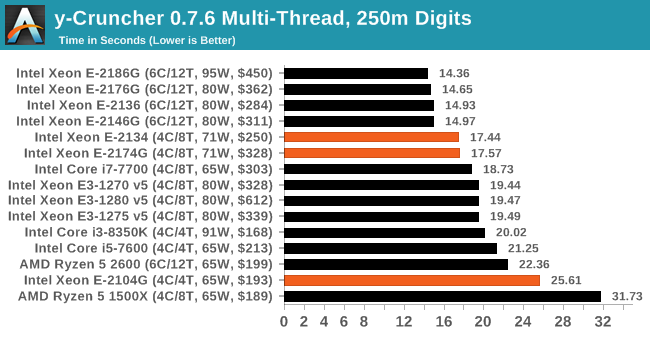

- y-Cruncher v0.7.6: Pi calculation with optimized instruction sets for new CPUs (new)

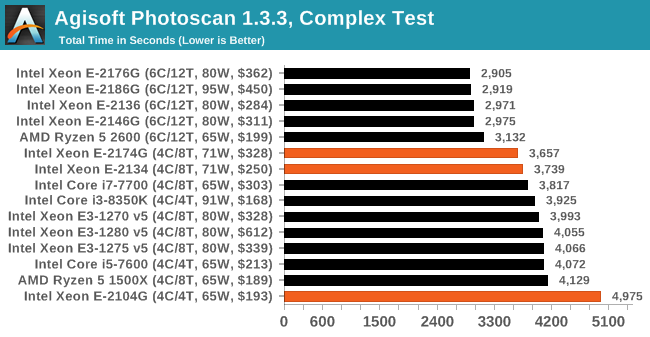

- Agisoft Photoscan 1.3.3: 2D image to 3D modelling tool (updated)

Render

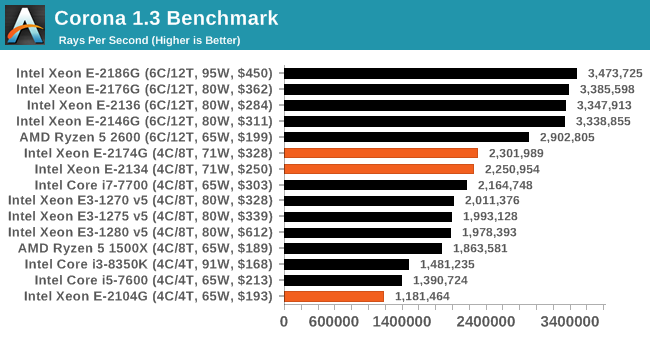

- Corona 1.3: Performance renderer for 3dsMax, Cinema4D (same as 2017)

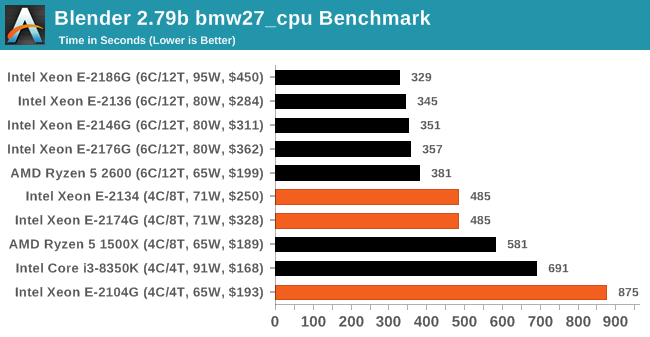

- Blender 2.79b: Render of bmw27 on CPU (updated to 2.79b)

- LuxMark v3.1 C++ and OpenCL: Test of different rendering code paths (same as 2017)

- POV-Ray 3.7.1: Built-in benchmark (updated)

- CineBench R15: Older Cinema4D test, will likely remain in Bench (same as 2017)

Encoding

- 7-zip 1805: Built-in benchmark (updated to v1805)

- WinRAR 5.60b3: Compression test of directory with video and web files (updated to 5.60b3)

- AES Encryption: In-memory AES performance. Slightly older test. (same as 2017)

- Handbrake 1.1.0: Logitech C920 1080p60 input file, transcoded into three formats for streaming/storage:

- 720p60, x264, 6000 kbps CBR, Fast, High Profile

- 1080p60, x264, 3500 kbps CBR, Faster, Main Profile

- 1080p60, HEVC, 3500 kbps VBR, Fast, 2-Pass Main Profile

Web

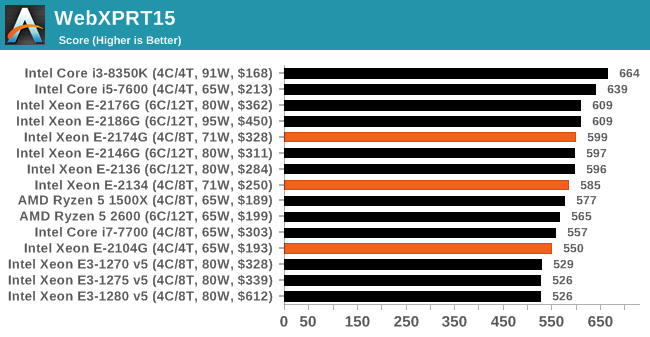

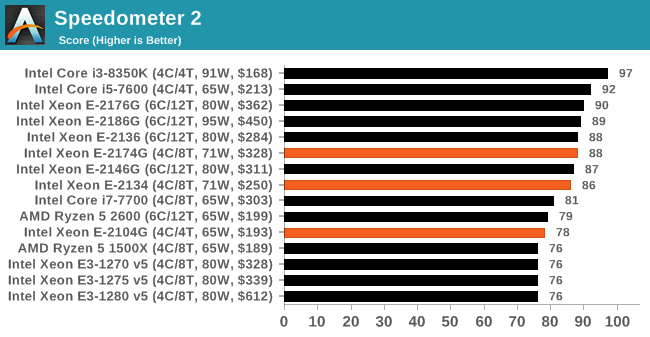

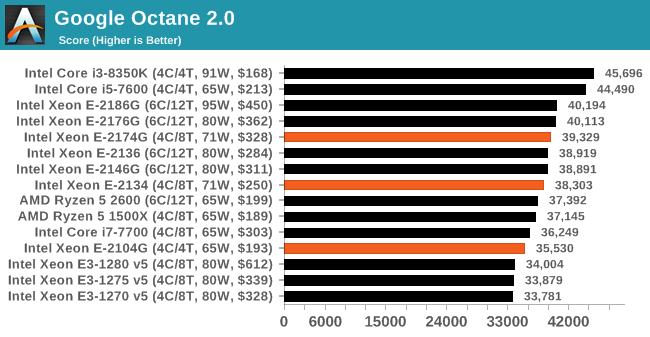

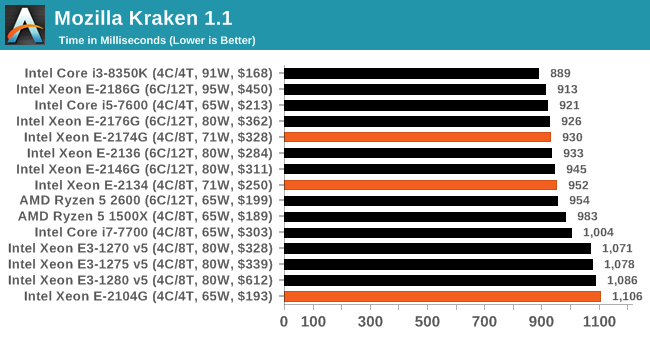

- WebXPRT3: The latest WebXPRT test (updated)

- WebXPRT15: Similar to 3, but slightly older. (same as 2017)

- Speedometer2: Javascript Framework test (new)

- Google Octane 2.0: Deprecated but popular web test (same as 2017)

- Mozilla Kraken 1.1: Deprecated but popular web test (same as 2017)

Legacy (same as 2017)

- 3DPM v1: Older version of 3DPM, very naïve code

- x264 HD 3.0: Older transcode benchmark

- Cinebench R11.5 and R10: Representative of different coding methodologies

Linux (when feasible)

When in full swing, we wish to return to running LinuxBench 1.0. This was in our 2016 test, but was ditched in 2017 as it added an extra complication layer to our automation. By popular request, we are going to run it again.

Integrated and CPU Gaming

We have recently automated around a dozen games at four different performance levels. A good number of games will have frame time data, however due to automation complications, some will not. The idea is that we get a good overview of a number of different genres and engines for testing. So far we have the following games automated:

AnandTech CPU Gaming 2019 Game List

| Game | Genre | Release Date | API | IGP | Low | Med | High |
|---|---|---|---|---|---|---|---|
| World of Tanks enCore | Driving / Action | Feb 2018 | DX11 | 768p Minimum | 1080p Medium | 1080p Ultra | 4K Ultra |
| Final Fantasy XV | JRPG | Mar 2018 | DX11 | 720p Standard | 1080p Standard | 4K Standard | 8K Standard |
| Shadow of War | Action / RPG | Sep 2017 | DX11 | 720p Ultra | 1080p Ultra | 4K High | 8K High |
| F1 2018 | Racing | Aug 2018 | DX11 | 720p Low | 1080p Med | 4K High | 4K Ultra |
| Civilization VI | RTS | Oct 2016 | DX12 | 1080p Ultra | 4K Ultra | 8K Ultra | 16K Low |
| Ashes: Classic | RTS | Mar 2016 | DX12 | 720p Standard | 1080p Standard | 1440p Standard | 4K Standard |
| Strange Brigade* | FPS | Aug 2018 | DX12, Vulkan | 720p Low | 1080p Medium | 1440p High | 4K Ultra |
| Shadow of the Tomb Raider | Action | Sep 2018 | DX12 | 720p Low | 1080p Medium | 1440p High | 4K Highest |
| Grand Theft Auto V | Open World | Apr 2015 | DX11 | 720p Low | 1080p High | 1440p Very High | 4K Ultra |
| Far Cry 5 | FPS | Mar 2018 | DX11 | 720p Low | 1080p Normal | 1440p High | 4K Ultra |

*Strange Brigade is run in DX12 and Vulkan modes

For our CPU Gaming tests, we will be running on an NVIDIA GTX 1080. For the CPU benchmarks, we use an RX460 as we now have several units for concurrent testing.

In previous years we tested multiple GPUs on a small number of games – this time around, due to a Twitter poll I did which turned out exactly 50:50, we are doing it the other way around: more games, fewer GPUs.

Scale Up vs Scale Out: Benefits of Automation

One comment we get every now and again is that automation isn’t the best way of testing – there’s a higher barrier to entry, and it limits the tests that can be done. From our perspective, despite taking a little while to program properly (and get it right), automation means we can do several things:

- Guarantee consistent breaks between tests for cooldown to occur, rather than variable cooldown times based on ‘if I’m looking at the screen’

- Test several systems at once. I currently run five systems in my office (limited by the number of 4K monitors, and space) which means we can process more hardware at the same time

- We can leave tests to run overnight, very useful for a deadline

- With a good enough script, tests can be added very easily

Our benchmark suite collates all the results and spits out data as the tests are running to a central storage platform, which I can probe mid-run to update data as it comes through. This also acts as a mental check in case any of the data might be abnormal.

We do have one major limitation, and that rests on the side of our gaming tests. We are running multiple tests through one Steam account, some of which (like GTA) are online only. As Steam only lets one system play on an account at once, our gaming script probes Steam’s own APIs to determine if we are ‘online’ or not, and to run offline tests until the account is free to be logged in on that system. Depending on the number of games we test that absolutely require online mode, it can be a bit of a bottleneck.
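The scheduling idea can be sketched as follows. This is an illustration under stated assumptions rather than our script: `GameTest`, `next_test`, and the `account_is_free` flag are hypothetical, and the flag stands in for whatever the Steam query returns (no real Steam API call is shown).

```python
from typing import List, NamedTuple, Optional


class GameTest(NamedTuple):
    """Illustrative description of one queued gaming test."""
    name: str
    needs_online: bool


def next_test(pending: List[GameTest], account_is_free: bool) -> Optional[GameTest]:
    """Pick the next test to run on this system.

    When the shared account is free, use the opportunity for an
    online-only test; otherwise fall back to an offline test. Returns
    None when only online-only tests remain and the account is busy.
    """
    if account_is_free:
        for test in pending:
            if test.needs_online:
                return test
    for test in pending:
        if not test.needs_online:
            return test
    return None


queue = [GameTest("Grand Theft Auto V", True), GameTest("Final Fantasy XV", False)]
print(next_test(queue, account_is_free=False))
print(next_test(queue, account_is_free=True))
```

The `None` case is the bottleneck described above: a system with only online-only tests left has to wait for the account.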

Benchmark Suite Updates

As always, we do take requests. It helps us understand the workloads that everyone is running and plan accordingly.

A side note on software packages: we have had requests for tests on software such as ANSYS, or other professional grade software. The downside of testing this software is licensing and scale. Most of these companies do not particularly care about us running tests, and state it’s not part of their goals. Others, like Agisoft, are more than willing to help. If you are involved in these software packages, the best way to see us benchmark them is to reach out. We have special versions of software for some of our tests, and if we can get something that works, and is relevant to the audience, then we shouldn’t have too much difficulty adding it to the suite.

CPU Performance: System Tests

Our System Test section focuses significantly on real-world testing and user experience, with a slight nod to throughput. In this section we cover application loading time, image processing, simple scientific physics, emulation, neural simulation, optimized compute, and 3D model development, with a combination of readily available and custom software. For some of these tests, the bigger suites such as PCMark do cover them (we publish those values in our office section), although multiple perspectives are always beneficial. In all our tests we will explain in-depth what is being tested, and how we are testing.

All of our benchmark results can also be found in our benchmark engine, Bench.

Application Load: GIMP 2.10.4

One of the most important aspects of user experience and workflow is how fast a system responds. A good test of this is to see how long it takes for an application to load. Most applications these days, when on an SSD, load fairly instantly, however some office tools require asset pre-loading before being available. Most operating systems employ caching as well, so when certain software is loaded repeatedly (web browser, office tools), it can be initialized much quicker.

In our last suite, we tested how long it took to load a large PDF in Adobe Acrobat. Unfortunately this test was a nightmare to program for, and didn’t transfer over to Win10 RS3 easily. In the meantime we discovered an application that can automate this test, and we put it up against GIMP, a popular free open-source photo editing tool, and the major alternative to Adobe Photoshop. We set it to load a large 50MB design template, and perform the load 10 times with 10 seconds in-between each. Due to caching, the first 3-5 results are often slower than the rest, and the time to cache can be inconsistent, so we take the average of the last five results to show CPU processing on cached loading.
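The averaging step is simple enough to show as a minimal sketch. The function name and the sample timings are made up for illustration; only the "ten loads, average the last five" rule comes from the description above.

```python
from statistics import mean
from typing import Sequence


def cached_load_time(load_times: Sequence[float], keep_last: int = 5) -> float:
    """Average the final `keep_last` load times, discarding warm-up runs."""
    if len(load_times) < keep_last:
        raise ValueError("not enough runs to average")
    return mean(load_times[-keep_last:])


# Hypothetical timings in seconds: the early runs are slower while the
# operating system is still caching the application and template.
runs = [6.0, 5.5, 4.5, 4.0, 3.5, 3.0, 3.5, 2.5, 3.0, 3.0]
print(cached_load_time(runs))
```

Discarding the first five runs keeps the inconsistent cache warm-up out of the reported number, at the cost of saying nothing about cold-start behaviour.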

For short bursty workloads, most processors are within the same sort of margins. The slower E-2104G takes a bit longer, however.

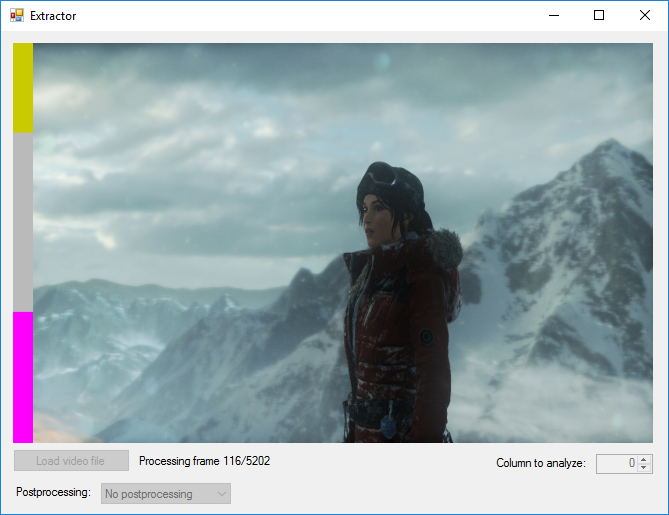

FCAT: Image Processing

The FCAT software was developed to help detect microstuttering, dropped frames, and runt frames in graphics benchmarks when two accelerators were paired together to render a scene. Due to game engines and graphics drivers, not all GPU combinations performed ideally, which led to this software fixing colors to each rendered frame and dynamic raw recording of the data using a video capture device.

The FCAT software takes that recorded video, which in our case is 90 seconds of a 1440p run of Rise of the Tomb Raider, and processes that color data into frame time data so the system can plot an ‘observed’ frame rate, and correlate that to the power consumption of the accelerators. This test, by virtue of how quickly it was put together, is single threaded. We run the process and report the time to completion.

With a longer sustained single thread workload, there's not much in most of the processors selected.

3D Particle Movement v2.1: Brownian Motion

Our 3DPM test is a custom built benchmark designed to simulate six different particle movement algorithms of points in a 3D space. The algorithms were developed as part of my PhD and, while they ultimately perform best on a GPU, they provide a good idea of how instruction streams are interpreted by different microarchitectures.

A key part of the algorithms is the random number generation – we use relatively fast generation which ends up implementing dependency chains in the code. The upgrade over the naïve first version of this code solved for false sharing in the caches, a major bottleneck. We are also looking at AVX2 and AVX512 versions of this benchmark for future reviews.

For this test, we run a stock particle set over the six algorithms for 20 seconds apiece, with 10 second pauses, and report the total rate of particle movement, in millions of operations (movements) per second. We have a non-AVX version and an AVX version, with the latter implementing AVX512 and AVX2 where possible.
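To give a flavor of the metric, here is a toy sketch of a single random-walk movement step and the 'million movements per second' calculation. It is not one of the six 3DPM algorithms: the sphere-sampling method, the function names, and the particle counts are all assumptions chosen for illustration.

```python
import math
import random
import time
from typing import List, Tuple


def random_unit_step(rng: random.Random) -> Tuple[float, float, float]:
    """Draw a unit-length step in a uniformly random 3D direction."""
    z = rng.uniform(-1.0, 1.0)
    phi = rng.uniform(0.0, 2.0 * math.pi)
    r = math.sqrt(1.0 - z * z)
    return r * math.cos(phi), r * math.sin(phi), z


def move_particles(num_particles: int, steps: int, seed: int = 0) -> List[List[float]]:
    """Move every particle `steps` times, starting from the origin."""
    rng = random.Random(seed)
    positions = [[0.0, 0.0, 0.0] for _ in range(num_particles)]
    for _ in range(steps):
        for pos in positions:
            dx, dy, dz = random_unit_step(rng)
            pos[0] += dx
            pos[1] += dy
            pos[2] += dz
    return positions


def million_moves_per_second(num_particles: int, steps: int, elapsed_seconds: float) -> float:
    """Convert a movement count and elapsed time into millions of moves per second."""
    return num_particles * steps / elapsed_seconds / 1e6


def benchmark(num_particles: int, steps: int) -> float:
    """Time one run and report its rate in millions of movements per second."""
    start = time.perf_counter()
    move_particles(num_particles, steps)
    elapsed = time.perf_counter() - start
    return million_moves_per_second(num_particles, steps, elapsed)


print(f"{benchmark(1000, 100):.3f} million movements per second")
```

Note that the sketch times a fixed number of steps, whereas the real test runs each algorithm for a fixed 20 seconds; the rate calculation is the same either way.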

3DPM v2.1 can be downloaded from our server: 3DPMv2.1.rar (13.0 MB)

The whole Xeon E-2100 family supports AVX2, which gives a doubling of performance over non-AVX code.

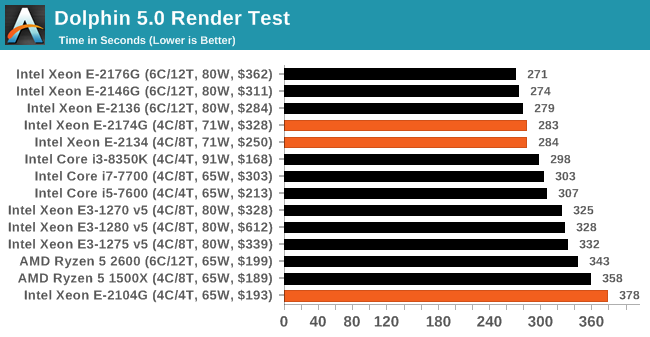

Dolphin 5.0: Console Emulation

One of the most requested tests in our suite involves console emulation. Being able to pick up a game from an older system and run it as expected depends on the overhead of the emulator: it takes a significantly more powerful x86 system to be able to accurately emulate an older non-x86 console, especially if code for that console was made to abuse certain physical bugs in the hardware.

For our test, we use the popular Dolphin emulation software, and run a compute project through it to determine how close to a standard console system our processors can emulate. In this test, a Nintendo Wii would take around 1050 seconds.

The latest version of Dolphin can be downloaded from https://dolphin-emu.org/

Similar to the GIMP loading test, the higher valued parts get similar scores, whereas the E-2104G lags behind.

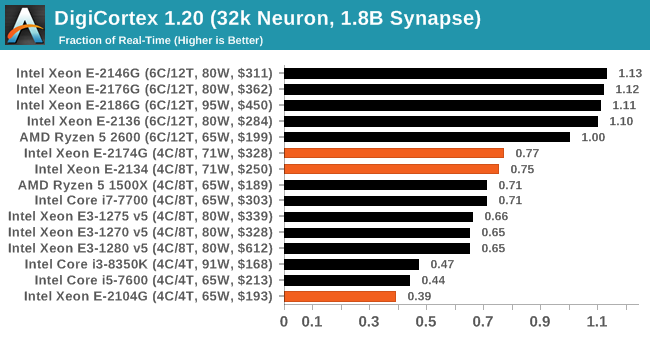

DigiCortex 1.20: Sea Slug Brain Simulation

This benchmark was originally designed for simulation and visualization of neuron and synapse activity, as is commonly found in the brain. The software comes with a variety of benchmark modes, and we take the small benchmark which runs a 32k neuron / 1.8B synapse simulation, equivalent to a Sea Slug.

Example of a 2.1B neuron simulation

We report the results as the ability to simulate the data as a fraction of real-time, so anything above a ‘one’ is suitable for real-time work. Out of the two modes, a ‘non-firing’ mode which is DRAM heavy and a ‘firing’ mode which has CPU work, we choose the latter. Despite this, the benchmark is still affected by DRAM speed a fair amount.
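In other words, the reported figure is a ratio of simulated time to wall-clock time. A minimal sketch, with hypothetical function names and made-up timings:

```python
def real_time_fraction(simulated_seconds: float, wall_clock_seconds: float) -> float:
    """Ratio of simulated time to the wall-clock time it took to compute."""
    if wall_clock_seconds <= 0:
        raise ValueError("wall-clock time must be positive")
    return simulated_seconds / wall_clock_seconds


def is_real_time_capable(fraction: float) -> bool:
    """Anything above one keeps up with real time."""
    return fraction > 1.0


# Hypothetical run: 10 seconds of simulated activity computed in 8 seconds.
fraction = real_time_fraction(10.0, 8.0)
print(fraction, is_real_time_capable(fraction))
```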

DigiCortex can be downloaded from http://www.digicortex.net/

DigiCortex does well with additional threads here, showing an almost 2x jump when enabling hyperthreading.

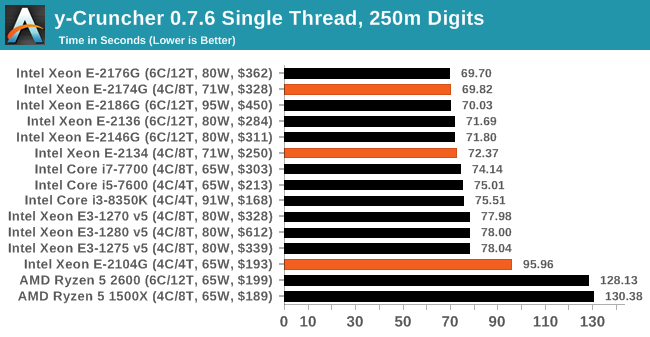

y-Cruncher v0.7.6: Microarchitecture Optimized Compute

I’ve known about y-Cruncher for a while, as a tool to help compute various mathematical constants, but it wasn’t until I began talking with its developer, Alex Yee, a researcher from NWU and now software optimization developer, that I realized that he has optimized the software like crazy to get the best performance. Naturally, any simulation that can take 20+ days can benefit from a 1% performance increase! Alex started y-cruncher as a high-school project, but it is now at a state where Alex is keeping it up to date to take advantage of the latest instruction sets before they are even made available in hardware.

For our test we run y-cruncher v0.7.6 through all the different optimized variants of the binary, single threaded and multi-threaded, including the AVX-512 optimized binaries. The test is to calculate 250m digits of Pi, and we use the single threaded and multi-threaded versions of this test.

Users can download y-cruncher from Alex’s website: http://www.numberworld.org/y-cruncher/

In single thread mode, we again see the jump from the slower E-2104G to the two faster parts. Given both of the faster parts have similar all-core turbos, we see that in MT mode they perform equally.

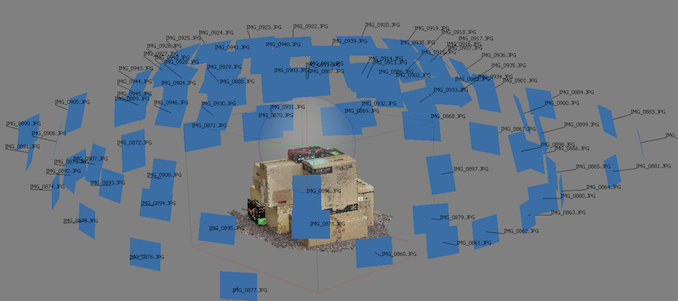

Agisoft Photoscan 1.3.3: 2D Image to 3D Model Conversion

One of the ISVs that we have worked with for a number of years is Agisoft, who develop software called PhotoScan that transforms a number of 2D images into a 3D model. This is an important tool in model development and archiving, and relies on a number of single threaded and multi-threaded algorithms to go from one side of the computation to the other.

In our test, we take v1.3.3 of the software with a good sized data set of 84 x 18 megapixel photos and push it through a reasonably fast variant of the algorithms that is still more stringent than our 2017 test. We report the total time to complete the process.

Agisoft’s Photoscan website can be found here: http://www.agisoft.com/

CPU Performance: Rendering Tests

Rendering is often a key target for processor workloads, lending itself to a professional environment. It comes in different formats as well, from 3D rendering through rasterization, such as games, or by ray tracing, and invokes the ability of the software to manage meshes, textures, collisions, aliasing, physics (in animations), and discarding unnecessary work. Most renderers offer CPU code paths, while a few use GPUs and select environments use FPGAs or dedicated ASICs. For big studios however, CPUs are still the hardware of choice.

All of our benchmark results can also be found in our benchmark engine, Bench.

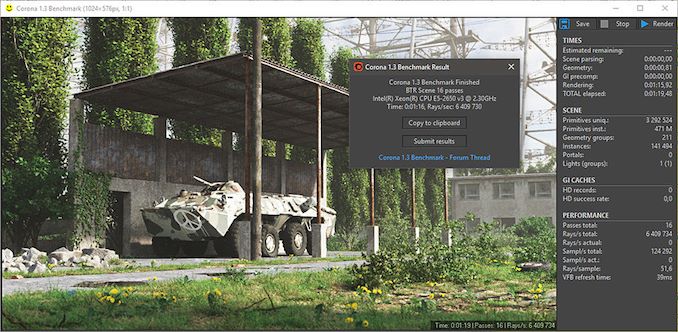

Corona 1.3: Performance Render

An advanced performance based renderer for software such as 3ds Max and Cinema 4D, the Corona benchmark renders a generated scene as a standard under its 1.3 software version. Normally the GUI implementation of the benchmark shows the scene being built, and allows the user to upload the result as a ‘time to complete’.

We got in contact with the developer who gave us a command line version of the benchmark that does a direct output of results. Rather than reporting time, we report the average number of rays per second across six runs, as the performance scaling of a result per unit time is typically visually easier to understand.

The Corona benchmark website can be found at https://corona-renderer.com/benchmark

With the rendering tests, all our quad-core Xeon E processors lose out to the respective cheaper Ryzen parts.

Blender 2.79b: 3D Creation Suite

A high profile rendering tool, Blender is open-source allowing for massive amounts of configurability, and is used by a number of high-profile animation studios worldwide. The organization recently released a Blender benchmark package, a couple of weeks after we had narrowed our Blender test for our new suite, however their test can take over an hour. For our results, we run one of the sub-tests in that suite through the command line - a standard ‘bmw27’ scene in CPU only mode, and measure the time to complete the render.
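A command-line render of this kind can be timed with a small wrapper like the one below. This is a sketch rather than our exact script: the function names and file paths are placeholders, it relies on Blender's `--background` and `--render-frame` options, and it assumes the scene file itself is already configured for CPU rendering.

```python
import subprocess
import time
from typing import List


def blender_render_command(blender_exe: str, scene_path: str, frame: int = 1) -> List[str]:
    """Build a headless Blender command that renders one frame of a scene."""
    return [blender_exe, "--background", scene_path, "--render-frame", str(frame)]


def time_command(command: List[str]) -> float:
    """Run a command to completion and return the elapsed wall-clock seconds."""
    start = time.perf_counter()
    subprocess.run(command, check=True)
    return time.perf_counter() - start


# Placeholder paths; point these at a local Blender install and scene file.
command = blender_render_command("blender", "bmw27.blend")
print(" ".join(command))
# elapsed = time_command(command)  # uncomment to actually run the render
```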

Blender can be downloaded at https://www.blender.org/download/

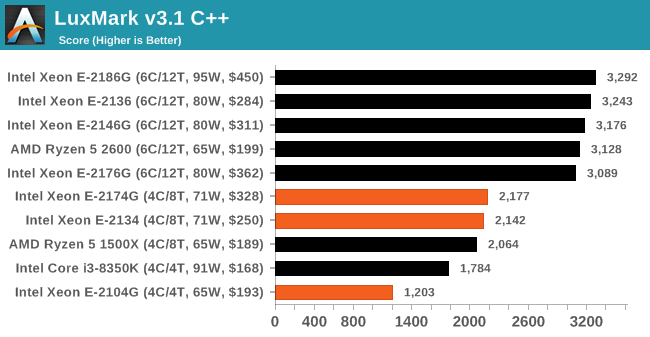

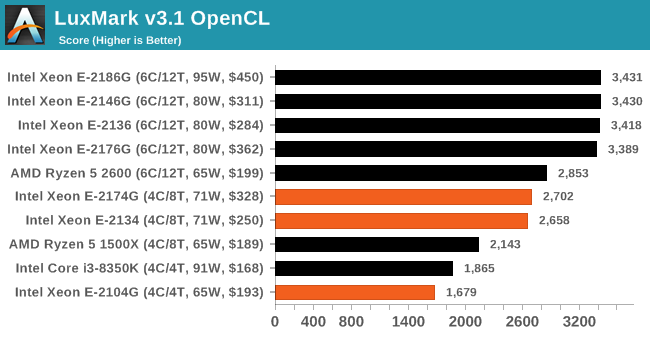

LuxMark v3.1: LuxRender via Different Code Paths

As stated at the top, there are many different ways to process rendering data: CPU, GPU, Accelerator, and others. On top of that, there are many frameworks and APIs in which to program, depending on how the software will be used. LuxMark, a benchmark developed using the LuxRender engine, offers several different scenes and APIs.

In our test, we run the simple ‘Ball’ scene on both the C++ and OpenCL code paths, but in CPU mode. This scene starts with a rough render and slowly improves the quality over two minutes, giving a final result in what is essentially an average ‘kilorays per second’.

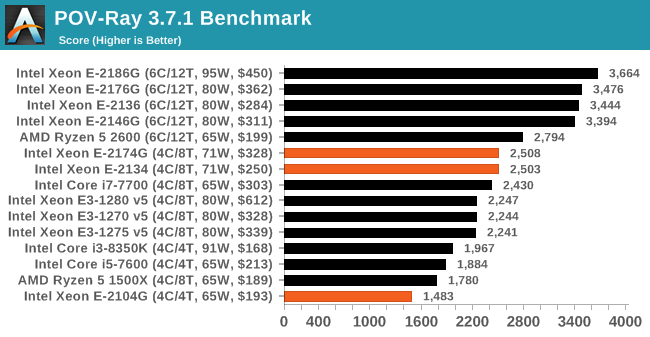

POV-Ray 3.7.1: Ray Tracing

The Persistence of Vision ray tracing engine is another well-known benchmarking tool, which was in a state of relative hibernation until AMD released its Zen processors, at which point both Intel and AMD suddenly began submitting code to the main branch of the open source project. For our test, we use the built-in benchmark for all-cores, called from the command line.

POV-Ray can be downloaded from http://www.povray.org/

The much cheaper Ryzen 5 2600 here gets a good perf/$ boost over any of the quad-core Xeon E processors, and with the right system, can have ECC enabled.

CPU Performance: Office Tests

The Office test suite is designed to focus around more industry standard tests that focus on office workflows, system meetings, some synthetics, but we also bundle compiler performance in with this section. For users that have to evaluate hardware in general, these are usually the benchmarks that most consider.

All of our benchmark results can also be found in our benchmark engine, Bench.

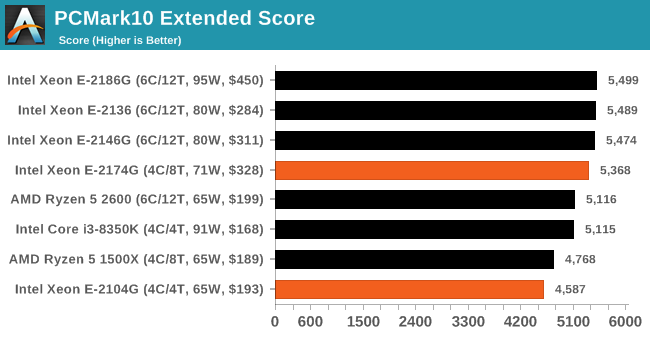

PCMark 10: Industry Standard System Profiler

Futuremark, now known as UL, has developed benchmarks that have become industry standards for around two decades. The latest complete system test suite is PCMark 10, upgrading over PCMark 8 with updated tests and more OpenCL invested into use cases such as video streaming.

PCMark splits its scores into about 14 different areas, including application startup, web, spreadsheets, photo editing, rendering, video conferencing, and physics. We post all of these numbers in our benchmark database, Bench, however the key metric for the review is the overall score.

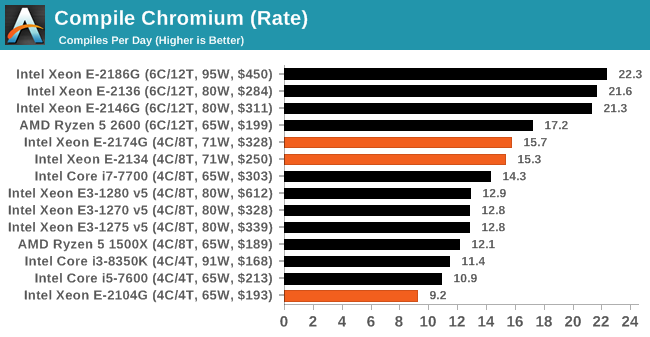

Chromium Compile: Windows VC++ Compile of Chrome 56

A large number of AnandTech readers are software engineers, looking at how the hardware they use performs. While compiling a Linux kernel is ‘standard’ for the reviewers who often compile, our test is a little more varied – we are using the Windows instructions to compile Chrome, specifically a Chrome 56 build from March 2017, as that was when we built the test. Google quite handily gives instructions on how to compile with Windows, along with a 400k file download for the repo.

In our test, using Google’s instructions, we use the MSVC compiler and ninja developer tools to manage the compile. As you may expect, the benchmark is variably threaded, with a mix of DRAM requirements that benefit from faster caches. Data procured in our test is the time taken for the compile, which we convert into compiles per day.
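The conversion from compile time to compiles per day is a simple division. In this minimal sketch the function name and the example time are illustrative, not measured results:

```python
SECONDS_PER_DAY = 24 * 60 * 60


def compiles_per_day(compile_seconds: float) -> float:
    """Convert the time for one full compile into compiles per day."""
    if compile_seconds <= 0:
        raise ValueError("compile time must be positive")
    return SECONDS_PER_DAY / compile_seconds


# A hypothetical one-hour compile works out to 24 compiles per day.
print(compiles_per_day(3600))
```

Reporting a rate rather than a time means higher is better, which matches how most of the other charts read.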

The higher-end E-2174G gets a small lead over the E-2134, but in this case having more threads helps, so even the six-core E-2136 can beat the E-2174G.

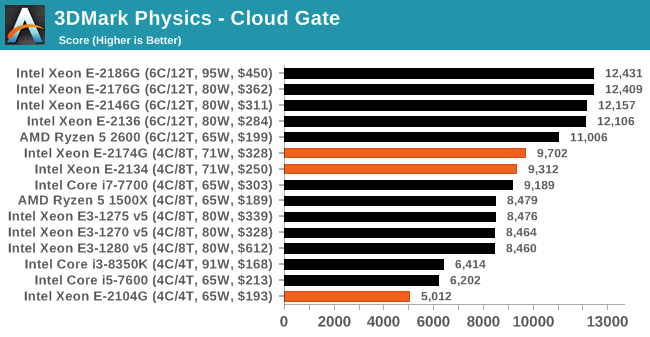

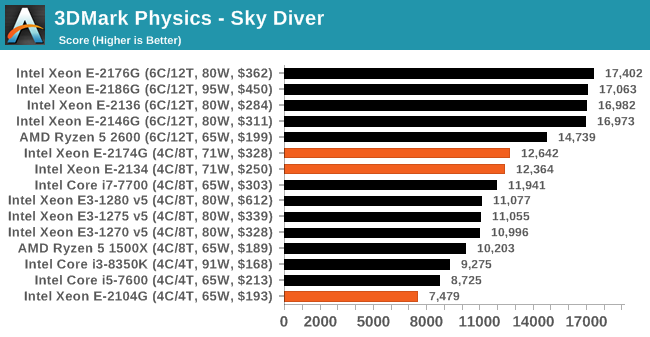

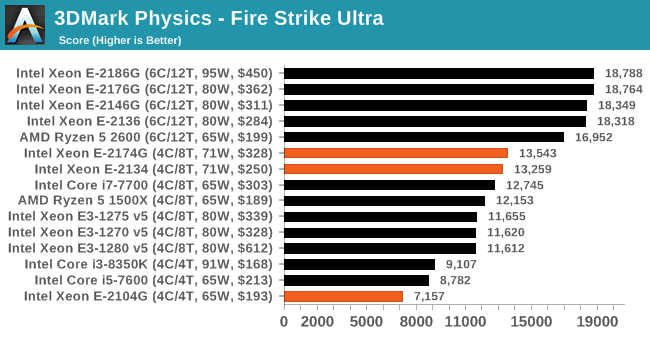

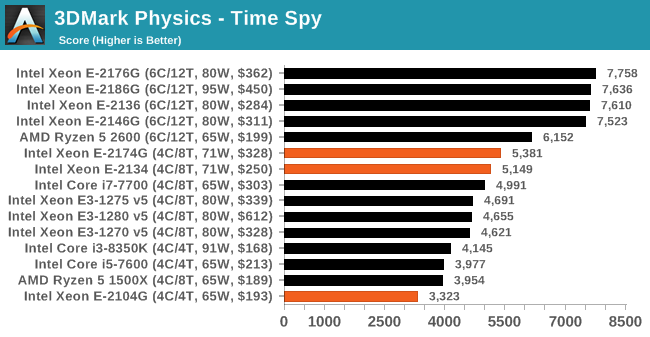

3DMark Physics: In-Game Physics Compute

Alongside PCMark is 3DMark, Futuremark’s (UL’s) gaming test suite. Each gaming test consists of one or two GPU heavy scenes, along with a physics test that is indicative of when the test was written and the platform it is aimed at. The main overriding tests, in order of complexity, are Ice Storm, Cloud Gate, Sky Diver, Fire Strike, and Time Spy.

Some of the subtests offer variants, such as Ice Storm Unlimited, which is aimed at mobile platforms with an off-screen rendering, or Fire Strike Ultra which is aimed at high-end 4K systems with lots of the added features turned on. Time Spy also currently has an AVX-512 mode (which we may be using in the future).

For our tests, we report in Bench the results from every physics test, but for the sake of the review we keep it to the most demanding of each scene: Ice Storm Unlimited, Cloud Gate, Sky Diver, Fire Strike Ultra, and Time Spy.

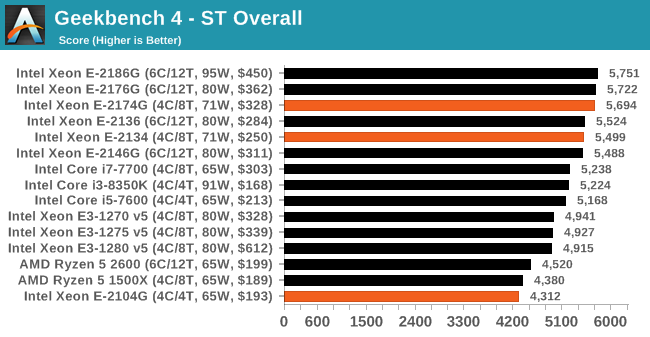

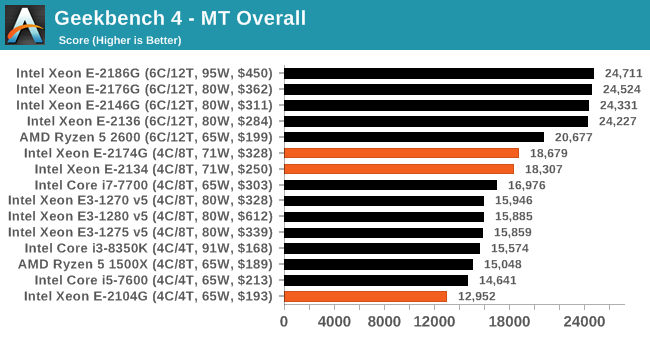

GeekBench4: Synthetics

A common tool for cross-platform testing between mobile, PC, and Mac, GeekBench 4 is an ultimate exercise in synthetic testing across a range of algorithms looking for peak throughput. Tests include encryption, compression, fast Fourier transform, memory operations, n-body physics, matrix operations, histogram manipulation, and HTML parsing.

I’m including this test due to popular demand, although the results do come across as overly synthetic, and a lot of users often put a lot of weight behind the test due to the fact that it is compiled across different platforms (although with different compilers).

We record the main subtest scores (Crypto, Integer, Floating Point, Memory) in our benchmark database, but for the review we post the overall single and multi-threaded results.

CPU Performance: Encoding Tests

With the rise of streaming, vlogs, and video content as a whole, encoding and transcoding tests are becoming ever more important. Not only are more home users and gamers needing to convert video files into something more manageable, for streaming or archival purposes, but the servers that manage the output also handle data and log files with compression and decompression. Our encoding tasks are focused around these important scenarios, with input from the community for the best implementation of real-world testing.

All of our benchmark results can also be found in our benchmark engine, Bench.

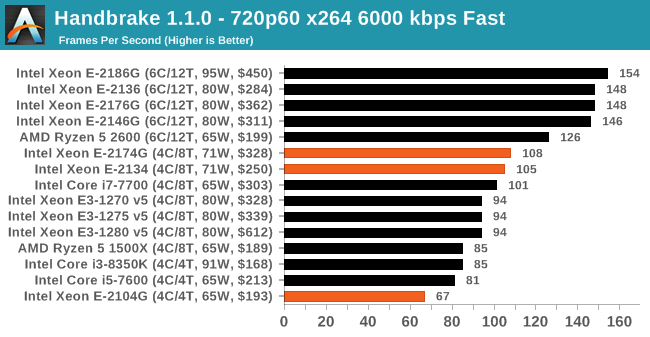

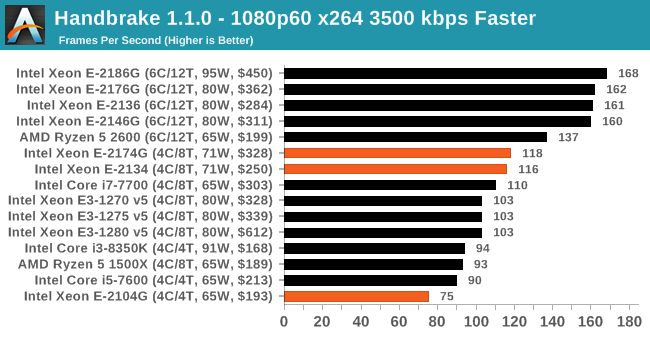

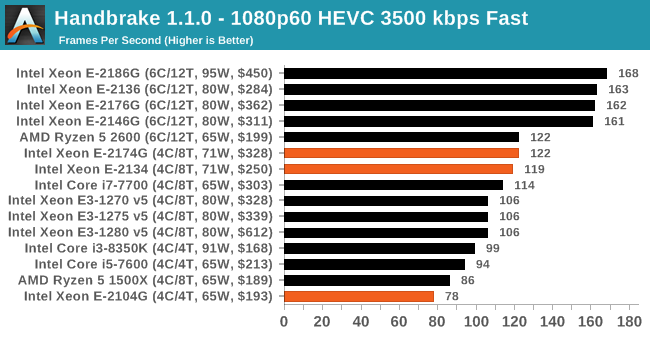

Handbrake 1.1.0: Streaming and Archival Video Transcoding

A popular open source tool, Handbrake is the anything-to-anything video conversion software that a number of people use as a reference point. The danger is always on version numbers and optimization, for example the latest versions of the software can take advantage of AVX-512 and OpenCL to accelerate certain types of transcoding and algorithms. The version we use here is a pure CPU play, with common transcoding variations.

We have split Handbrake up into several tests, using a Logitech C920 1080p60 native webcam recording (essentially a streamer recording), and convert it into two types of streaming formats and one for archival. The output settings used are:

- 720p60 at 6000 kbps constant bit rate, fast setting, high profile

- 1080p60 at 3500 kbps constant bit rate, faster setting, main profile

- 1080p60 HEVC at 3500 kbps variable bit rate, fast setting, main profile

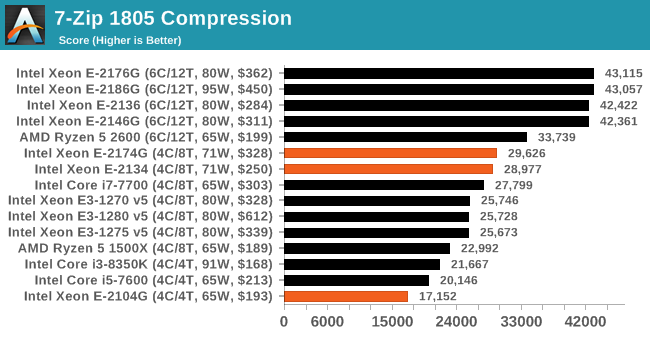

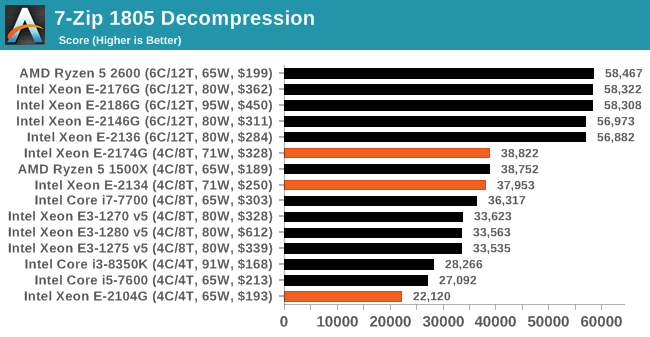

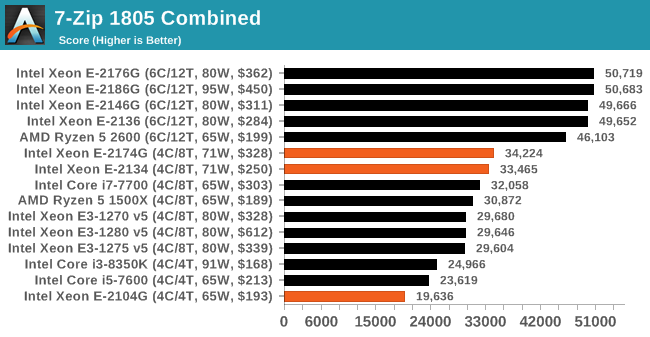

7-zip v1805: Popular Open-Source Encoding Engine

Out of our compression/decompression tool tests, 7-zip is the most requested and comes with a built-in benchmark. For our test suite, we’ve pulled the latest version of the software and we run the benchmark from the command line, reporting the compression, decompression, and a combined score.

It is noted in this benchmark that the latest multi-die processors have very bi-modal performance between compression and decompression, performing well in one and badly in the other. There are also discussions around how the Windows Scheduler is implementing every thread. As we get more results, it will be interesting to see how this plays out.

Please note, if you plan to share out the Compression graph, please include the Decompression one. Otherwise you’re only presenting half a picture.

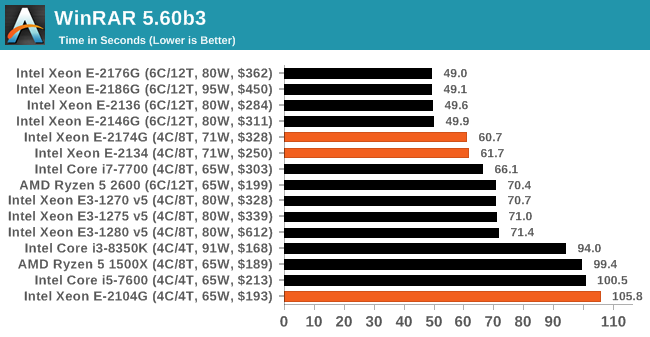

WinRAR 5.60b3: Archiving Tool

My compression tool of choice is often WinRAR, having been one of the first tools a number of my generation used over two decades ago. The interface has not changed much, although the integration with Windows right click commands is always a plus. It has no in-built test, so we run a compression over a set directory containing over thirty 60-second video files and 2000 small web-based files at a normal compression rate.

WinRAR is variable threaded but also susceptible to caching, so in our test we run it 10 times and take the average of the last five, leaving the test purely for raw CPU compute performance.
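The warm-up approach described above can be sketched as follows: run the workload several times, discard the early runs where caching effects dominate, and average the rest. The helper name and the sample timings are hypothetical.

```python
from statistics import mean


def warmed_average(run_times_s, keep_last=5):
    """Average only the final `keep_last` runs, discarding earlier warm-up runs."""
    if len(run_times_s) < keep_last:
        raise ValueError("not enough runs to average")
    return mean(run_times_s[-keep_last:])


# Hypothetical timings (seconds) for ten consecutive compression runs.
runs = [60.0, 55.0, 52.0, 51.0, 50.0, 48.0, 48.0, 47.0, 49.0, 48.0]
print(warmed_average(runs))
```

Dropping the first five runs trades a longer test for a figure that is less sensitive to whether the files were already cached.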

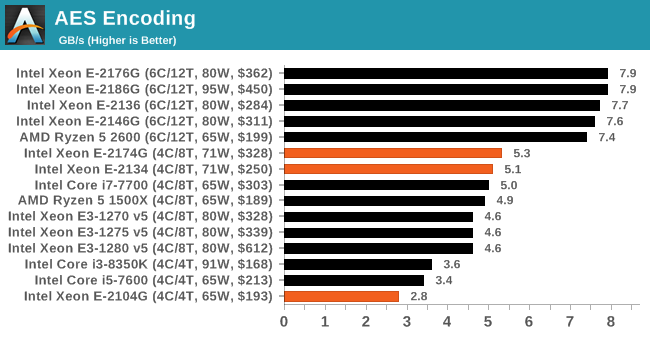

AES Encryption: File Security

A number of platforms, particularly mobile devices, are now offering encryption by default with file systems in order to protect the contents. Windows based devices have these options as well, often applied by BitLocker or third-party software. In our AES encryption test, we used the discontinued TrueCrypt for its built-in benchmark, which tests several encryption algorithms directly in memory.

The data we take for this test is the combined AES encrypt/decrypt performance, measured in gigabytes per second. The software does use AES commands for processors that offer hardware selection, however not AVX-512.

CPU Performance: Web and Legacy Tests

While more the focus of low-end and small form factor systems, web-based benchmarks are notoriously difficult to standardize. Modern web browsers are frequently updated, with no recourse to disable those updates, and as such there is difficulty in keeping a common platform. The fast paced nature of browser development means that version numbers (and performance) can change from week to week. Despite this, web tests are often a good measure of user experience: a lot of office work today revolves around web applications, particularly email and office apps, but also interfaces and development environments. Our web tests include some of the industry standard tests, as well as a few popular but older tests.

We have also included our legacy benchmarks in this section, representing a stack of older code for popular benchmarks.

All of our benchmark results can also be found in our benchmark engine, Bench.

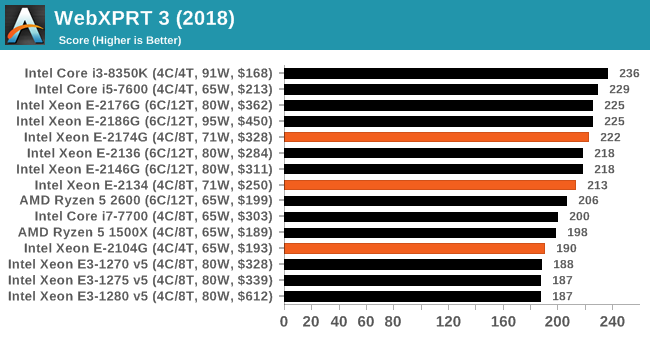

WebXPRT 3: Modern Real-World Web Tasks, including AI

The company behind the XPRT test suites, Principled Technologies, has recently released the latest web-test, and rather than attach a year to the name have just called it ‘3’. This latest test (as we started the suite) has built upon and developed the ethos of previous tests: user interaction, office compute, graph generation, list sorting, HTML5, image manipulation, and even goes as far as some AI testing.

For our benchmark, we run the standard test which goes through the benchmark list seven times and provides a final result. We run this standard test four times, and take an average.

Users can access the WebXPRT test at http://principledtechnologies.com/benchmarkxprt/webxprt/

WebXPRT 2015: HTML5 and Javascript Web UX Testing

The older version of WebXPRT is the 2015 edition, which focuses on a slightly different set of web technologies and frameworks that are in use today. This is still a relevant test, especially for users interacting with not-the-latest web applications in the market, of which there are a lot. Web framework development is often very quick but with high turnover, meaning that frameworks are quickly developed, built-upon, used, and then developers move on to the next, and adjusting an application to a new framework is a difficult arduous task, especially with rapid development cycles. This leaves a lot of applications as ‘fixed-in-time’, and relevant to user experience for many years.

Similar to WebXPRT3, the main benchmark is a sectional run repeated seven times, with a final score. We repeat the whole thing four times, and average those final scores.

Speedometer 2: JavaScript Frameworks

Our newest web test is Speedometer 2, which is an accrued test over a series of JavaScript frameworks to do three simple things: build a list, enable each item in the list, and remove the list. All the frameworks implement the same visual cues, but obviously apply them from different coding angles.

Our test goes through the list of frameworks, and produces a final score indicative of ‘rpm’, one of the benchmark’s internal metrics. We report this final score.

Google Octane 2.0: Core Web Compute

A popular web test for several years, but now no longer being updated, is Octane, developed by Google. Version 2.0 of the test performs the best part of two-dozen compute related tasks, such as regular expressions, cryptography, ray tracing, emulation, and Navier-Stokes physics calculations.

The test gives each sub-test a score and produces a geometric mean of the set as a final result. We run the full benchmark four times, and average the final results.
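For readers unfamiliar with the scoring, here is a minimal sketch of a geometric mean over sub-test scores, followed by an arithmetic average across repeated runs. The function names and the sample scores are hypothetical, and this is not Octane's own code.

```python
import math
from statistics import mean


def geometric_mean(scores):
    """Geometric mean, computed via logarithms to avoid overflow on large products."""
    if not scores or any(s <= 0 for s in scores):
        raise ValueError("scores must be a non-empty sequence of positive values")
    return math.exp(sum(math.log(s) for s in scores) / len(scores))


def reported_score(runs):
    """Average the per-run geometric means across several full benchmark runs."""
    return mean(geometric_mean(run) for run in runs)


# Hypothetical sub-test scores from two runs.
print(reported_score([[10.0, 1000.0], [100.0, 100.0]]))
```

A geometric mean keeps one very high sub-test score from dominating the final result in the way it would with a plain arithmetic mean.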

Mozilla Kraken 1.1: Core Web Compute

Even older than Octane is Kraken, this time developed by Mozilla. This is an older test that does similar computational mechanics, such as audio processing or image filtering. Kraken seems to produce a highly variable result depending on the browser version, as it is a test that is keenly optimized for.

The main benchmark runs through each of the sub-tests ten times and produces an average time to completion for each loop, given in milliseconds. We run the full benchmark four times and take an average of the time taken.

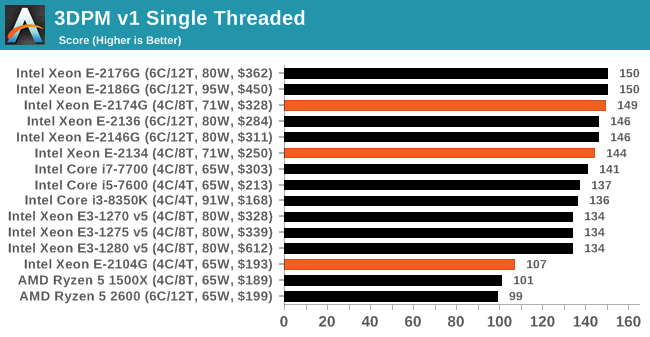

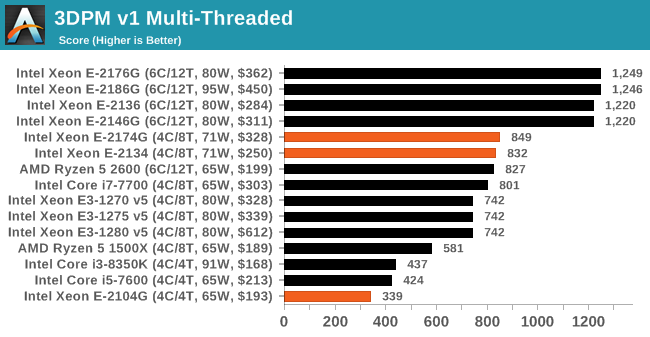

3DPM v1: Naïve Code Variant of 3DPM v2.1

The first legacy test in the suite is the first version of our 3DPM benchmark. This is the ultimate naïve version of the code, as if it was written by a scientist with no knowledge of how computer hardware, compilers, or optimization works (which in fact, it was at the start). This represents a large body of scientific simulation out in the wild, where getting the answer is more important than it being fast (getting a result in 4 days is acceptable if it’s correct, rather than sending someone away for a year to learn to code and getting the result in 5 minutes).

In this version, the only real optimization was in the compiler flags (-O2, -fp:fast), compiling it in release mode, and enabling OpenMP in the main compute loops. The loops were not configured for function size, and one of the key slowdowns is false sharing in the cache. It also has long dependency chains based on the random number generation, which leads to relatively poor performance on specific compute microarchitectures.

3DPM v1 can be downloaded with our 3DPM v2 code here: 3DPMv2.1.rar (13.0 MB)

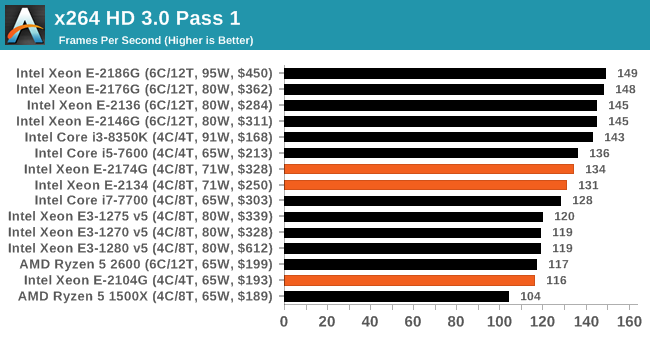

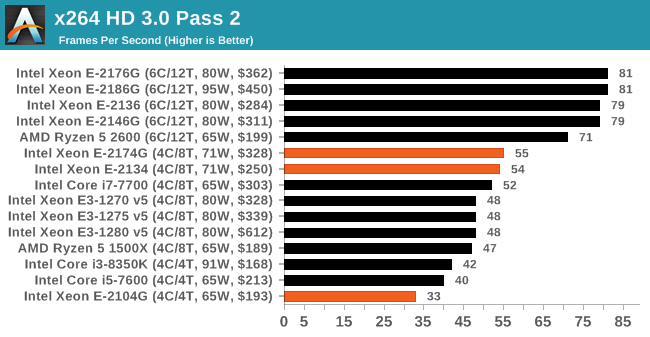

x264 HD 3.0: Older Transcode Test

This transcoding test is super old, and was used by Anand back in the day of Pentium 4 and Athlon II processors. Here a standardized 720p video is transcoded with a two-pass conversion, with the benchmark showing the frames-per-second of each pass. This benchmark is single-threaded, and between some micro-architectures we seem to actually hit an instructions-per-clock wall.

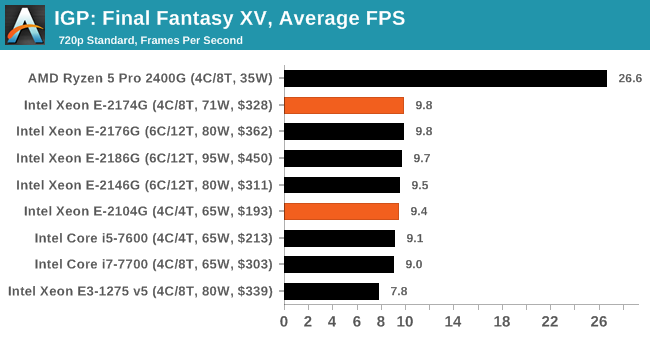

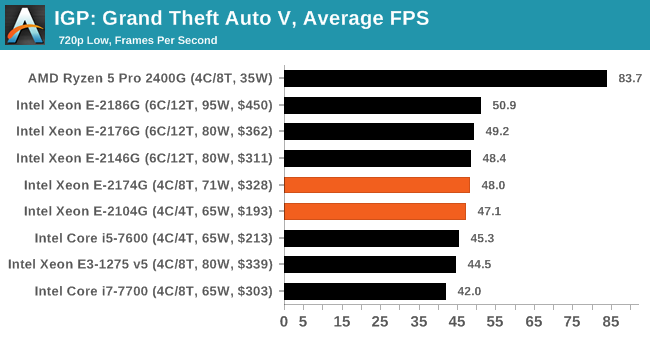

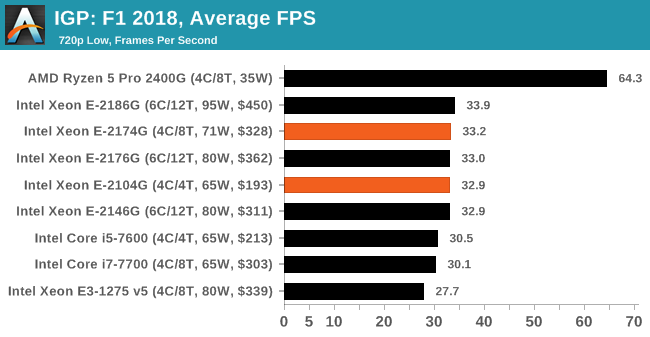

Gaming: Integrated Graphics

Despite being the ultimate joke at any bring-your-own-computer event, gaming on integrated graphics can ultimately be as rewarding as the latest mega-rig that costs the same as a car. The desire for strong integrated graphics in various shapes and sizes has waxed and waned over the years, with Intel relying on its latest ‘Gen’ graphics architecture while AMD happily puts its Vega architecture into the market to swallow up all the low-end graphics card sales. With Intel poised to make an attack on graphics in the next few years, it will be interesting to see how the graphics market develops, especially integrated graphics.

| AnandTech CPU Gaming 2019 Game List | |||||

| Game | Genre | Release Date | API | IGP | |

| Final Fantasy XV | JRPG | Mar 2018 | DX11 | 720p Standard | |

| F1 2018 | Racing | Aug 2018 | DX11 | 720p Low | |

| Grand Theft Auto V | Open World | Apr 2015 | DX11 | 720p Low | |

For our integrated graphics testing, we take our ‘IGP’ category settings for each game and loop the benchmark round for five minutes apiece, taking as much data as we can from our automated setup.

All of the Xeon E processors have Intel Graphics UHD P630 enabled, and so score about the same in all our integrated tests. However, these are all far behind AMD's Pro APUs that offer up to double the performance. Intel has not pushed much for integrated graphics performance in recent generations.

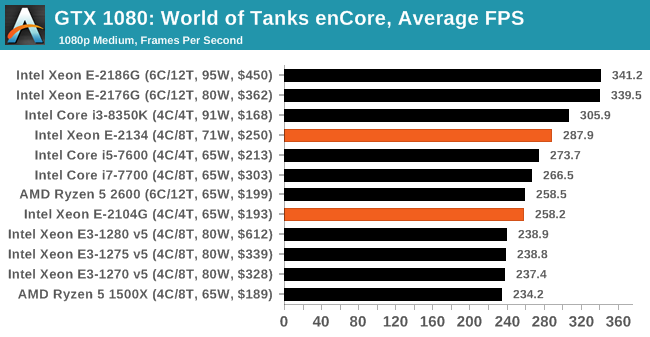

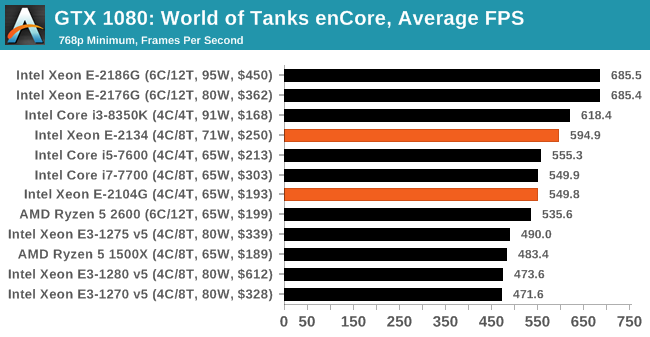

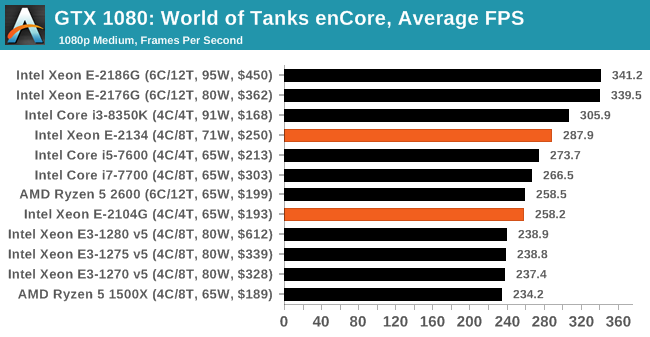

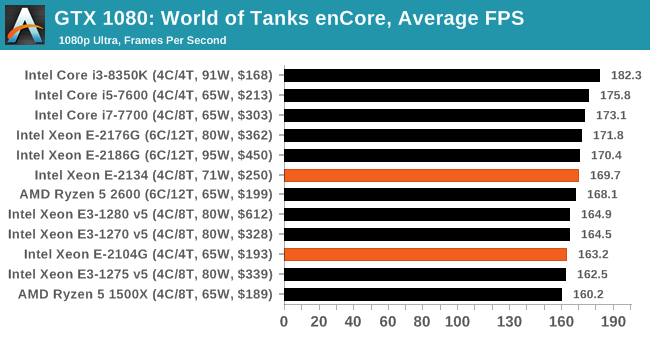

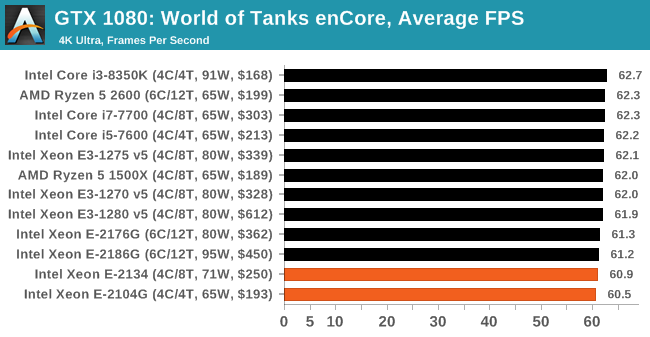

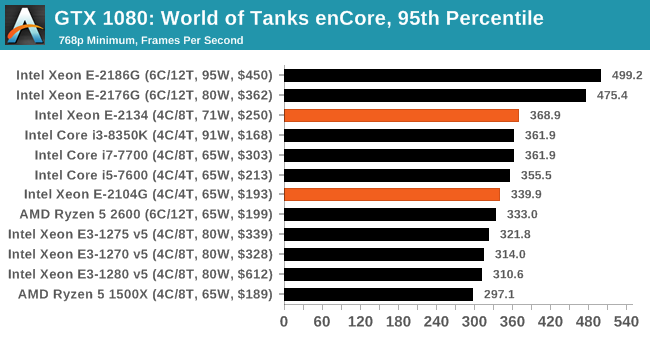

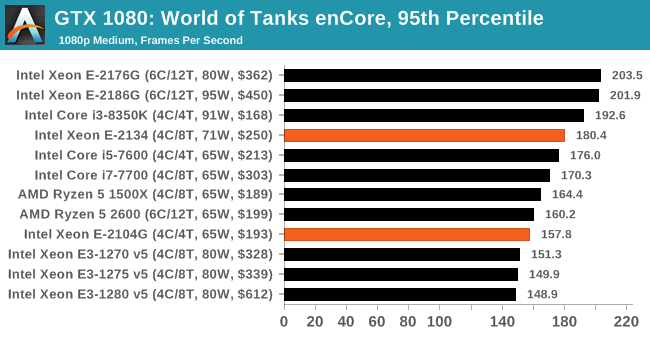

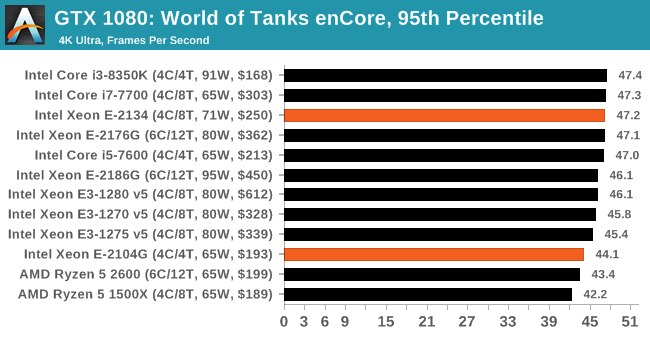

Gaming: World of Tanks enCore

Albeit different to most of the other commonly played MMO or massively multiplayer online games, World of Tanks is set in the mid-20th century and allows players to take control of a range of military based armored vehicles. World of Tanks (WoT) is developed and published by Wargaming who are based in Belarus, with the game’s soundtrack being primarily composed by Belarusian composer Sergey Khmelevsky. The game offers multiple entry points including a free-to-play element as well as allowing players to pay a fee to open up more features. One of the most interesting things about this tank based MMO is that it achieved eSports status when it debuted at the World Cyber Games back in 2012.

World of Tanks enCore is a demo application for a new and unreleased graphics engine penned by the Wargaming development team. Over time the new core engine will be implemented into the full game, upgrading the game’s visuals with key elements such as improved water, flora, shadows, and lighting, as well as other objects such as buildings. The World of Tanks enCore demo app not only offers insight into the impending game engine changes, but also allows users to check system performance to see if the new engine runs optimally on their system.

| AnandTech CPU Gaming 2019 Game List | ||||||||

| Game | Genre | Release Date | API | IGP | Low | Med | High | |

| World of Tanks enCore | Driving / Action | Feb 2018 | DX11 | 768p Minimum | 1080p Medium | 1080p Ultra | 4K Ultra | |

All of our benchmark results can also be found in our benchmark engine, Bench.

Results are charted as average FPS and 95th percentile frame rates at the IGP, Low, Medium, and High settings.

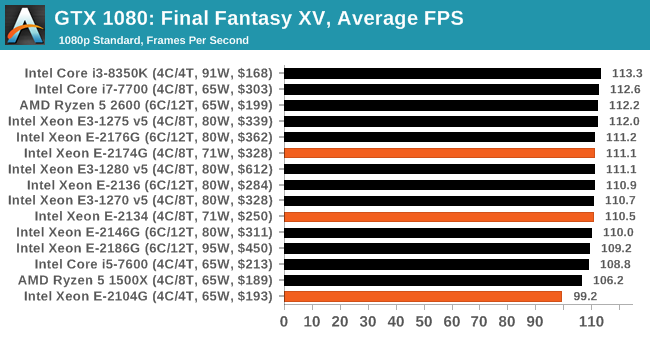

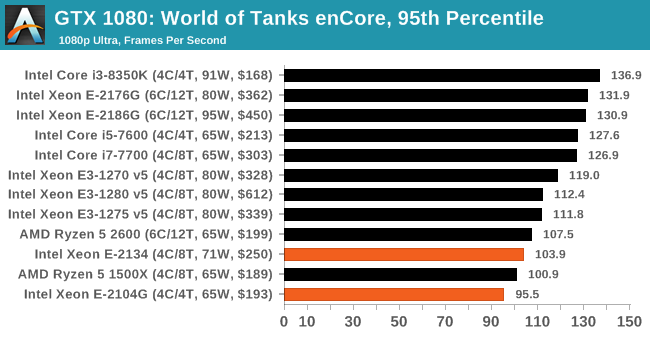

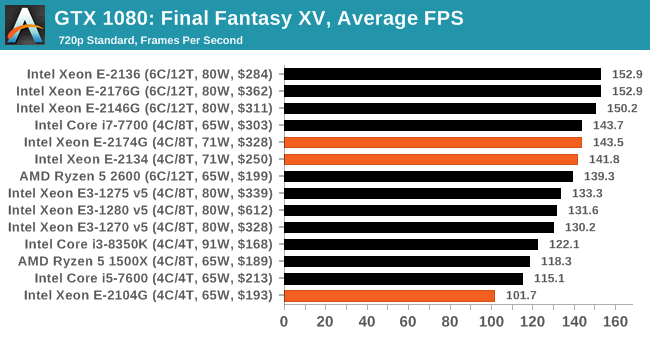

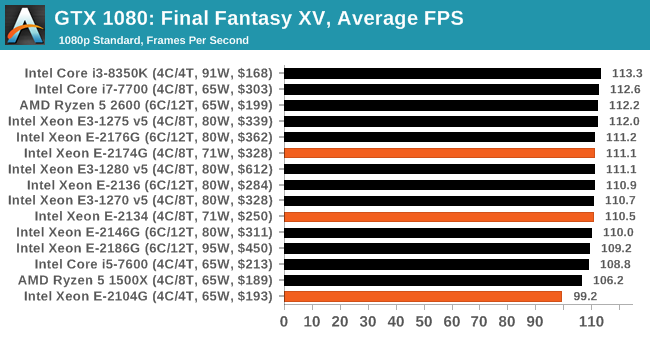

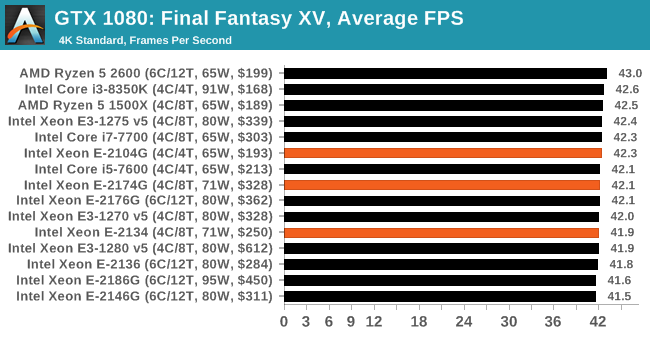

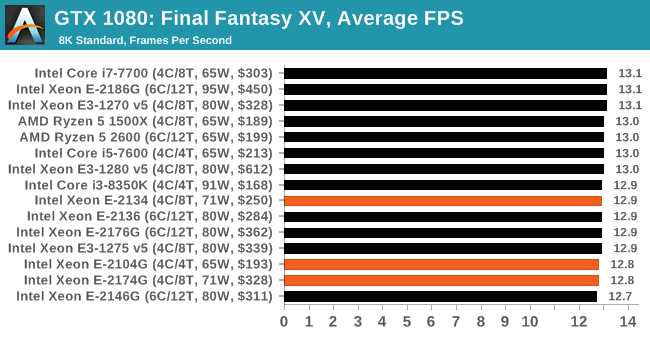

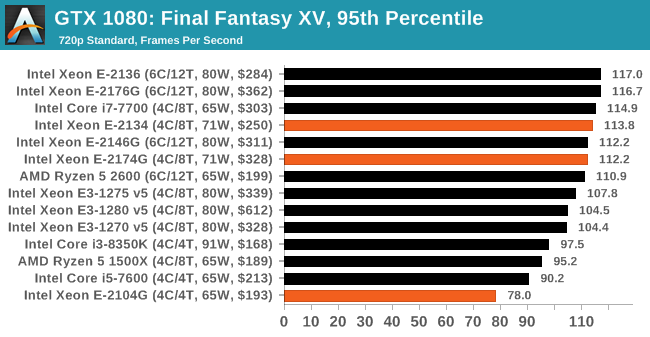

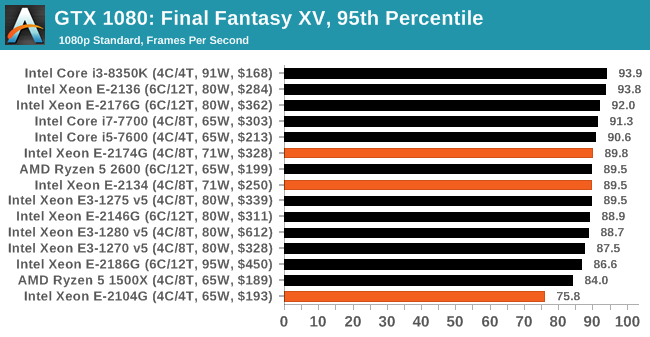

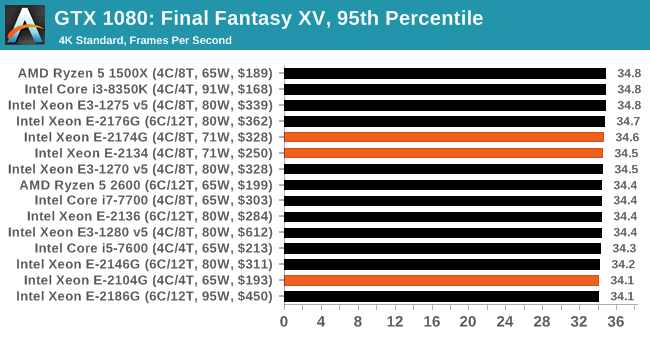

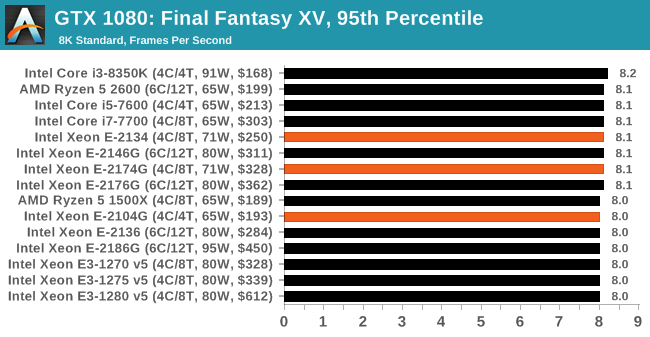

Gaming: Final Fantasy XV

Upon arriving on PC in March 2018, Final Fantasy XV: Windows Edition was given a graphical overhaul as it was ported over from console, the fruits of Square Enix's successful partnership with NVIDIA, with hardly any hint of the troubles during Final Fantasy XV's original production and development.

In preparation for the launch, Square Enix opted to release a standalone benchmark that they have since updated. Using the Final Fantasy XV standalone benchmark gives us a lengthy standardized sequence to record, although it should be noted that its heavy use of NVIDIA technology means that the Maximum setting has problems - it renders items off screen. To get around this, we use the standard preset which does not have these issues.

Square Enix has patched the benchmark with custom graphics settings and bugfixes to be much more accurate in profiling in-game performance and graphical options. For our testing, we run the standard benchmark with a FRAPs overlay, taking a 6 minute recording of the test.

| AnandTech CPU Gaming 2019 Game List | ||||||||

| Game | Genre | Release Date | API | IGP | Low | Med | High | |

| Final Fantasy XV | JRPG | Mar 2018 | DX11 | 720p Standard | 1080p Standard | 4K Standard | 8K Standard | |

All of our benchmark results can also be found in our benchmark engine, Bench.

Results are charted as average FPS and 95th percentile frame rates at the IGP, Low, Medium, and High settings.

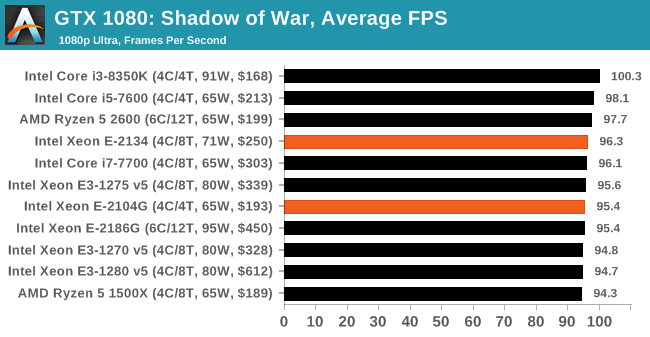

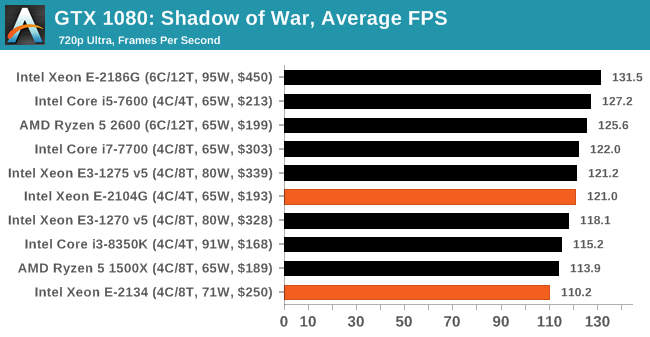

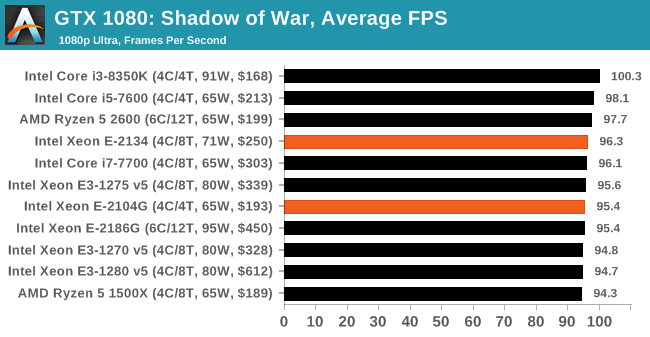

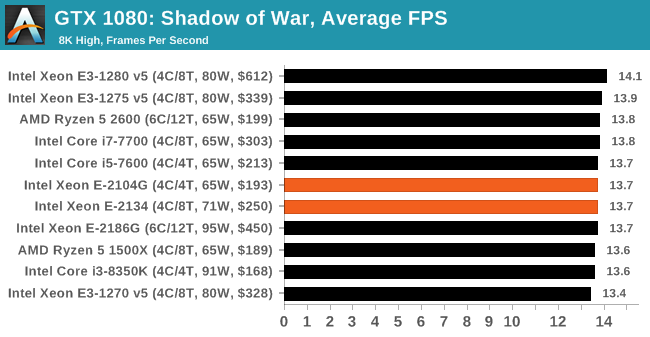

Gaming: Shadow of War

Next up is Middle-earth: Shadow of War, the sequel to Shadow of Mordor. Developed by Monolith, whose last hit was arguably F.E.A.R., Shadow of Mordor returned them to the spotlight with an innovative NPC rival generation and interaction system called the Nemesis System, along with a storyline based on J.R.R. Tolkien's legendarium, and making it work on a highly modified engine that originally powered F.E.A.R. in 2005.

Using the new LithTech Firebird engine, Shadow of War improves on the detail and complexity, and with free add-on high-resolution texture packs, offers itself as a good example of getting the most graphics out of an engine that may not be bleeding edge. Shadow of War also supports HDR (HDR10).

| AnandTech CPU Gaming 2019 Game List | ||||||||

| Game | Genre | Release Date | API | IGP | Low | Med | High | |

| Shadow of War | Action / RPG | Sep 2017 | DX11 | 720p Ultra | 1080p Ultra | 4K High | 8K High | |

All of our benchmark results can also be found in our benchmark engine, Bench.

Results are charted as average FPS at the IGP, Low, Medium, and High settings.

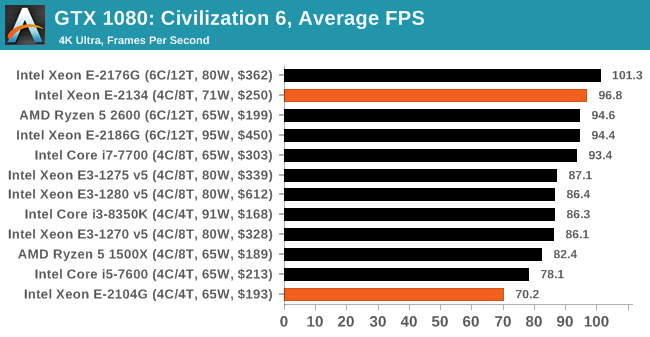

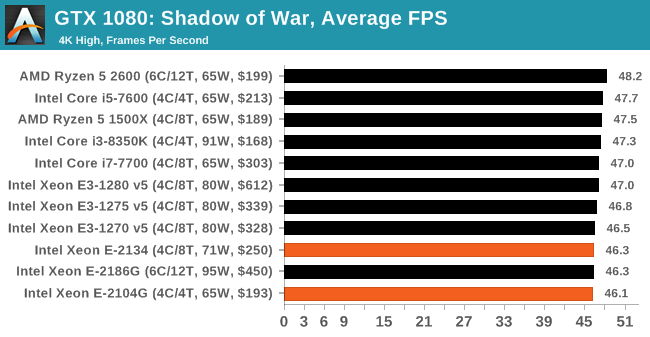

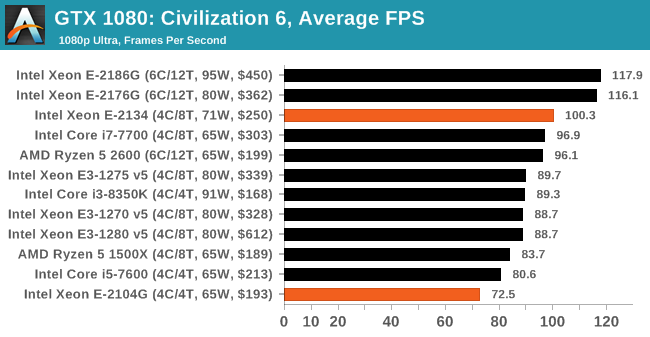

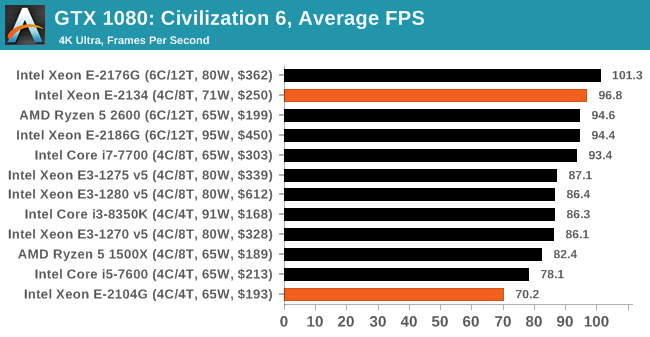

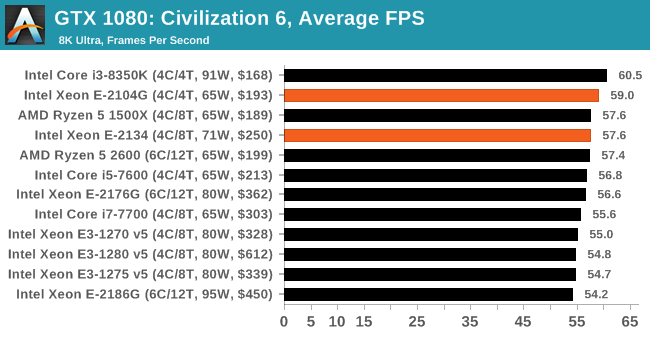

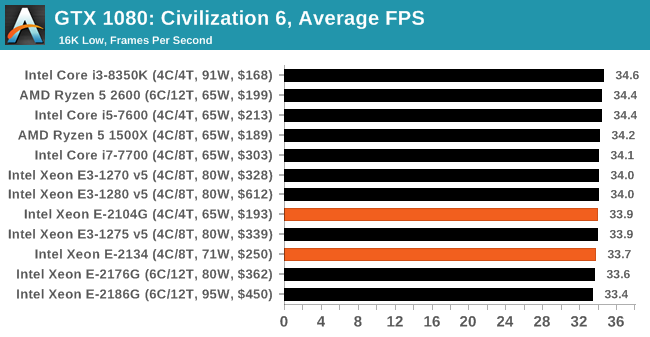

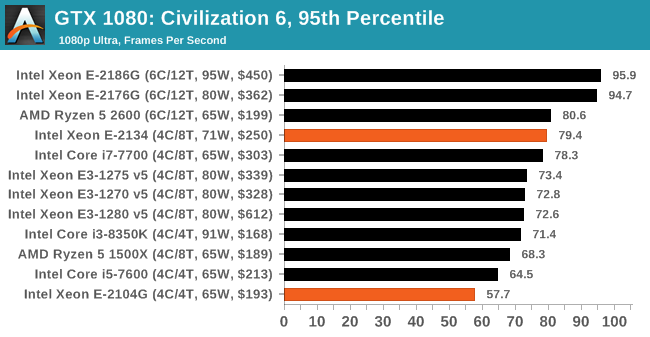

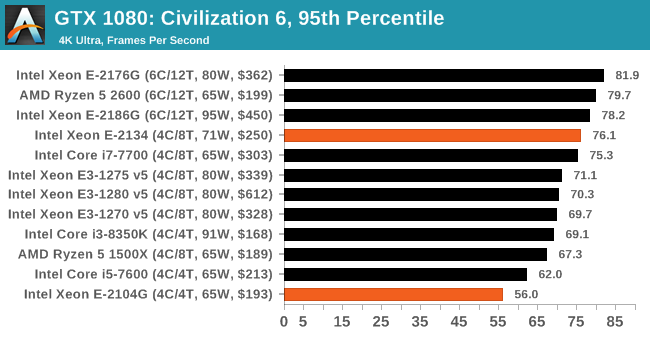

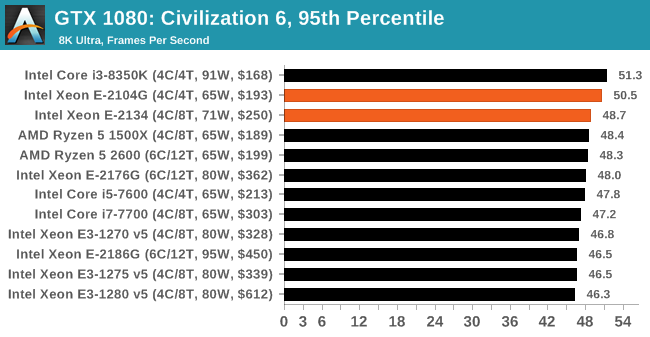

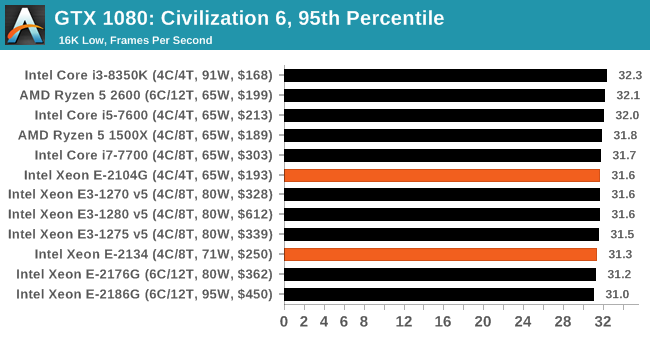

Gaming: Civilization 6 (DX12)

Originally penned by Sid Meier and his team, the Civ series of turn-based strategy games are a cult classic, and many an excuse for an all-nighter trying to get Gandhi to declare war on you due to an integer overflow. Truth be told I never actually played the first version, but in every edition from the second to the sixth, including the fourth as voiced by the late Leonard Nimoy, it is a game that is easy to pick up, but hard to master.

Benchmarking Civilization has always been somewhat of an oxymoron – for a turn based strategy game, the frame rate is not necessarily the important thing here and even in the right mood, something as low as 5 frames per second can be enough. With Civilization 6 however, Firaxis went hardcore on visual fidelity, trying to pull you into the game. As a result, Civilization can be taxing on graphics and CPUs as we crank up the details, especially in DirectX 12.

Perhaps a more poignant benchmark would be during the late game, when in the older versions of Civilization it could take 20 minutes to cycle around the AI players before the human regained control. The new version of Civilization has an integrated ‘AI Benchmark’, although it is not currently part of our benchmark portfolio, due to technical reasons which we are trying to solve. Instead, we run the graphics test, which provides an example of a mid-game setup at our settings.

| AnandTech CPU Gaming 2019 Game List | ||||||||

| Game | Genre | Release Date | API | IGP | Low | Med | High | |

| Civilization VI | RTS | Oct 2016 | DX12 | 1080p Ultra | 4K Ultra | 8K Ultra | 16K Low | |

All of our benchmark results can also be found in our benchmark engine, Bench.

Results are charted as average FPS and 95th percentile frame rates at the IGP, Low, Medium, and High settings.

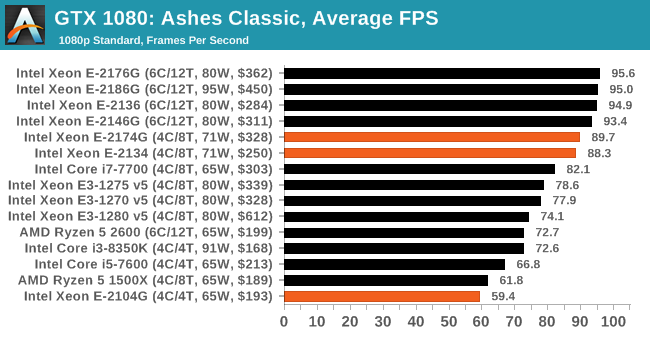

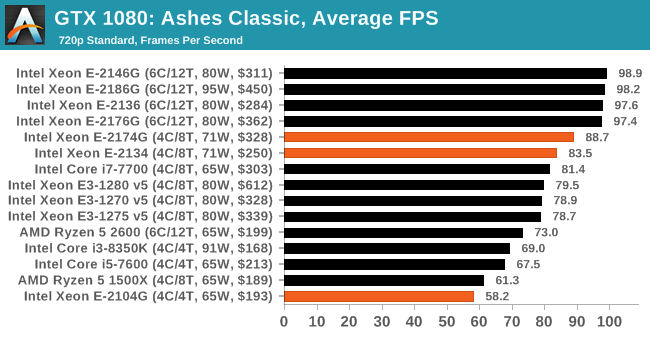

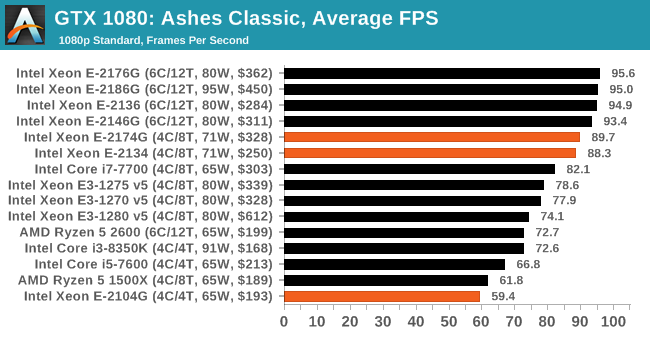

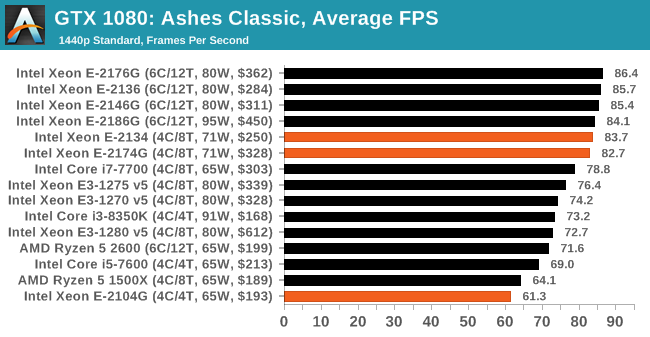

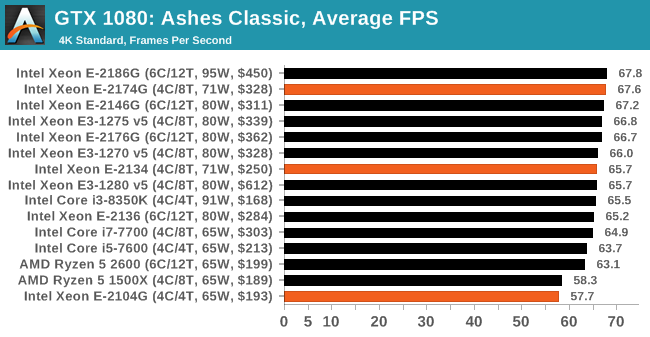

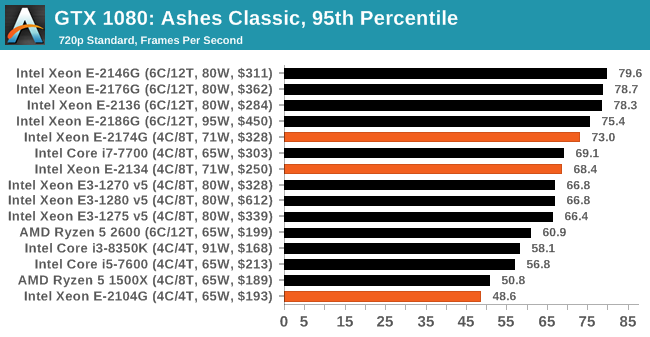

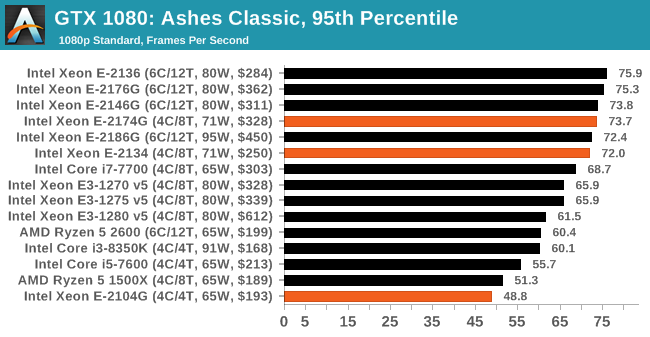

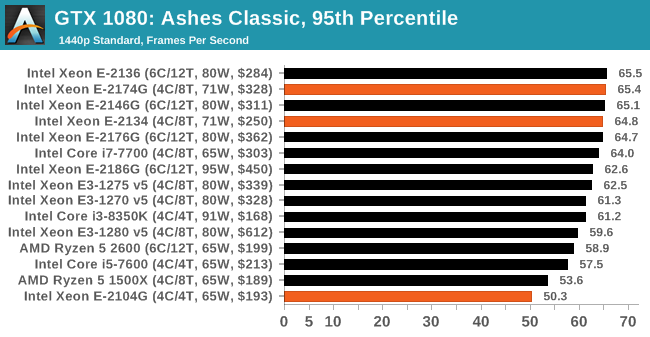

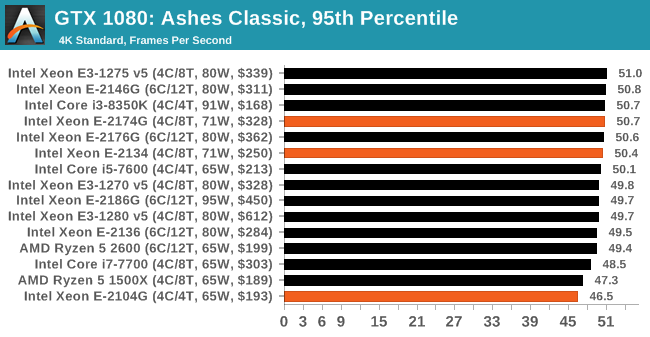

Gaming: Ashes Classic (DX12)

Seen as the holy child of DirectX12, Ashes of the Singularity (AoTS, or just Ashes) has been the first title to actively go explore as many of the DirectX12 features as it possibly can. Stardock, the developer behind the Nitrous engine which powers the game, has ensured that the real-time strategy title takes advantage of multiple cores and multiple graphics cards, in as many configurations as possible.

As a real-time strategy title, Ashes is all about responsiveness during both wide open shots but also concentrated battles. With DirectX12 at the helm, the ability to implement more draw calls per second allows the engine to work with substantial unit depth and effects that other RTS titles had to rely on combined draw calls to achieve, making some combined unit structures ultimately very rigid.

Stardock clearly understands the importance of an in-game benchmark, ensuring that such a tool was available and capable from day one; with all the additional DX12 features in use, being able to characterize how they affected the title was important for the developer. The in-game benchmark performs a four minute fixed seed battle environment with a variety of shots, and outputs a vast amount of data to analyze.

For our benchmark, we run Ashes Classic: an older version of the game before the Escalation update. The reason for this is that this is easier to automate, without a splash screen, but still has a strong visual fidelity to test.

Ashes has dropdown options for MSAA, Light Quality, Object Quality, Shading Samples, Shadow Quality, Textures, and separate options for the terrain. There are several presets, from Very Low to Extreme: we run our benchmarks at the above settings, and take the frame-time output for our average and percentile numbers.
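To show how a frame-time log can be reduced to those two figures, here is a minimal sketch. It assumes the percentile number is the frame rate corresponding to the 95th-percentile frame time (nearest-rank method); the function names and sample data are hypothetical and this is not the actual processing script.

```python
def average_fps(frame_times_ms):
    """Average frame rate: total frames divided by total elapsed time."""
    total_seconds = sum(frame_times_ms) / 1000.0
    return len(frame_times_ms) / total_seconds


def percentile_fps(frame_times_ms, percentile=95):
    """Frame rate at the given frame-time percentile (nearest-rank, integer percentile)."""
    ordered = sorted(frame_times_ms)
    # Integer ceiling of percentile * n / 100, avoiding floating-point rounding.
    rank = (percentile * len(ordered) + 99) // 100
    slow_frame_ms = ordered[max(rank, 1) - 1]
    return 1000.0 / slow_frame_ms


# Hypothetical log: eighteen 10 ms frames plus two slower frames.
frames = [10.0] * 18 + [20.0, 40.0]
print(average_fps(frames), percentile_fps(frames))
```

The percentile figure is always at or below the average on a log like this, because it is set by the slow tail of frames rather than by the bulk of them.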

| AnandTech CPU Gaming 2019 Game List | ||||||||

| Game | Genre | Release Date | API | IGP | Low | Med | High | |

| Ashes: Classic | RTS | Mar 2016 | DX12 | 720p Standard | 1080p Standard | 1440p Standard | 4K Standard | |

All of our benchmark results can also be found in our benchmark engine, Bench.

Results are charted as average FPS and 95th percentile frame rates at the IGP, Low, Medium, and High settings.

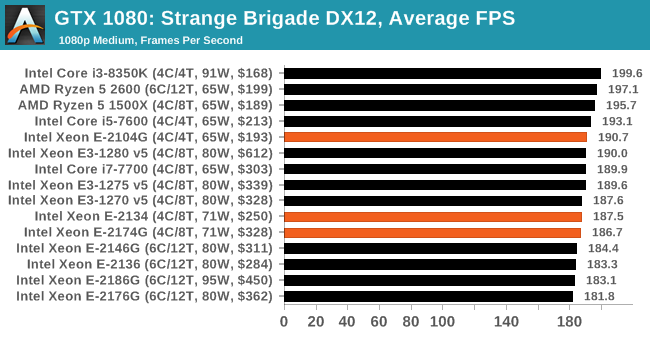

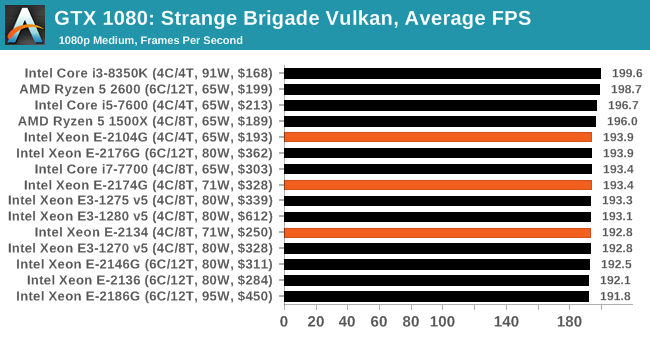

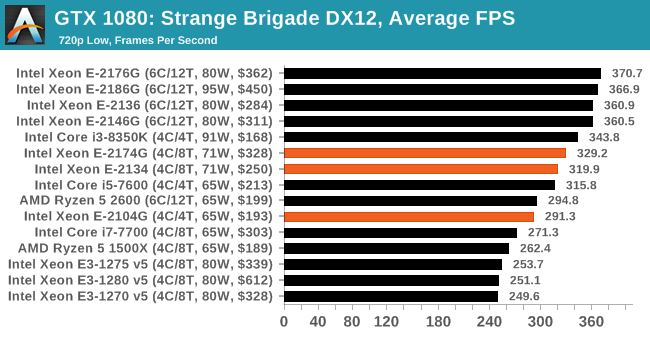

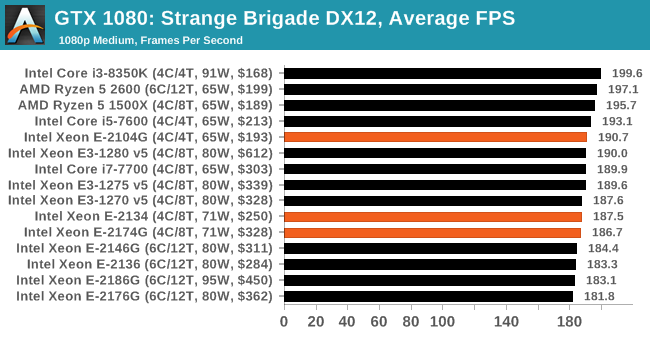

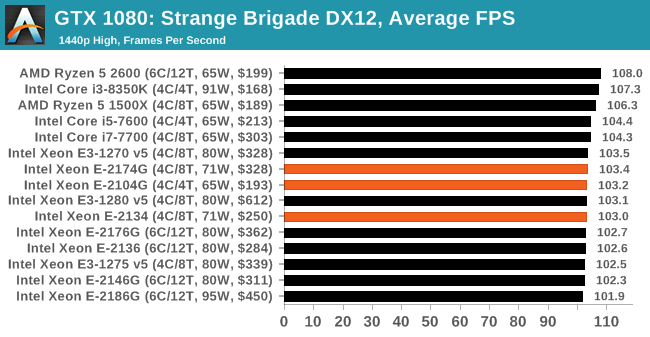

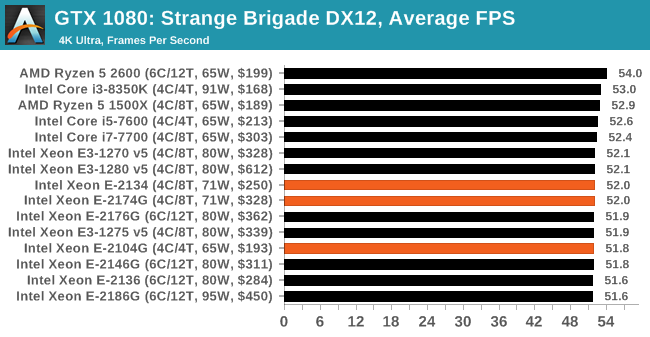

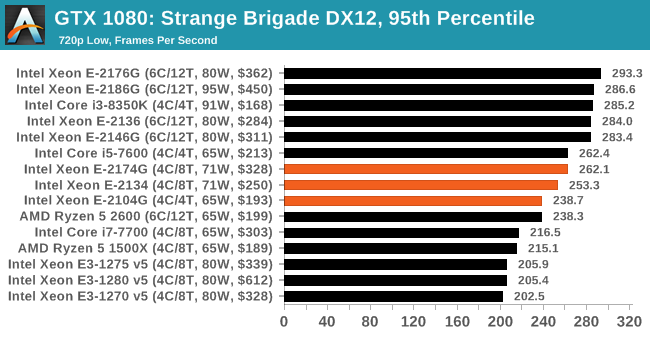

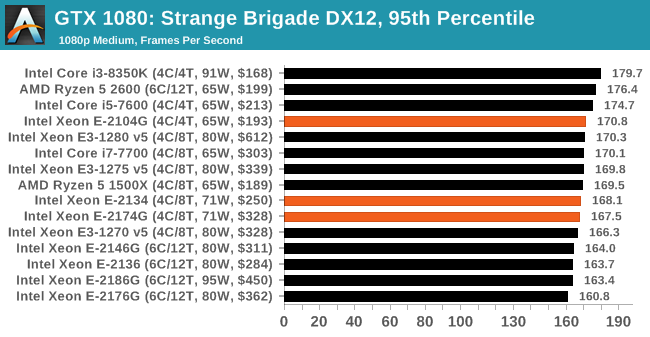

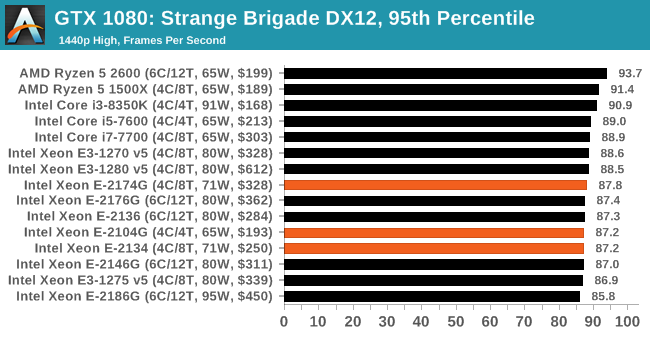

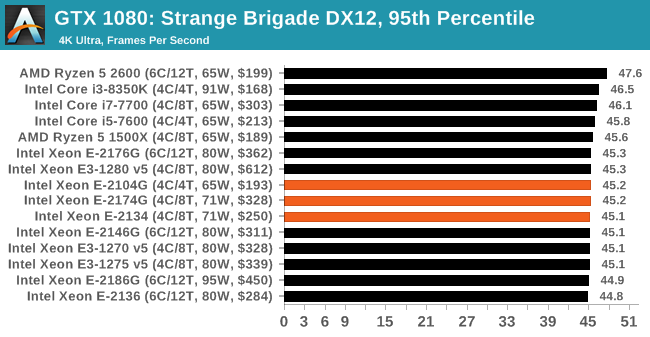

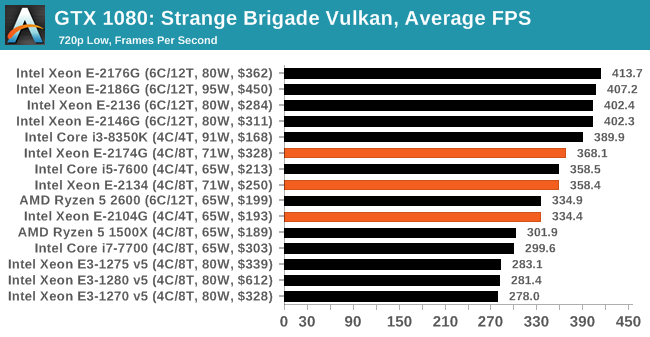

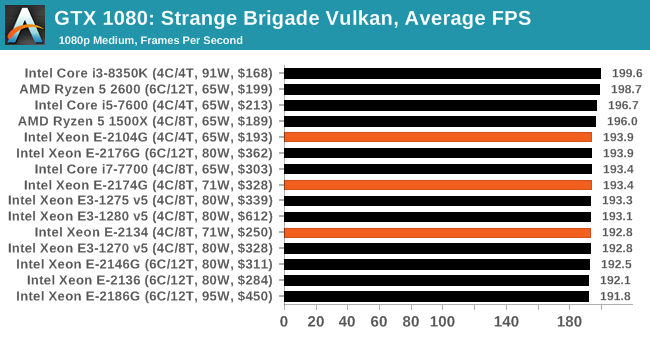

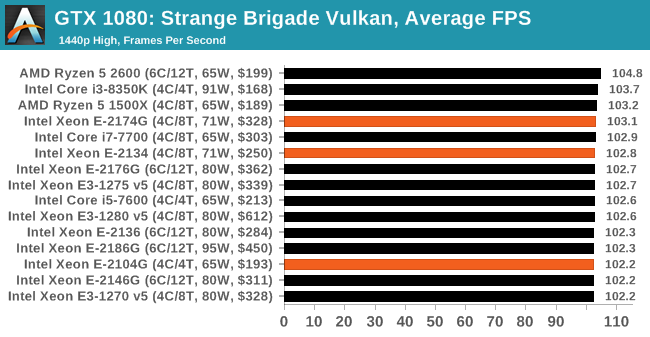

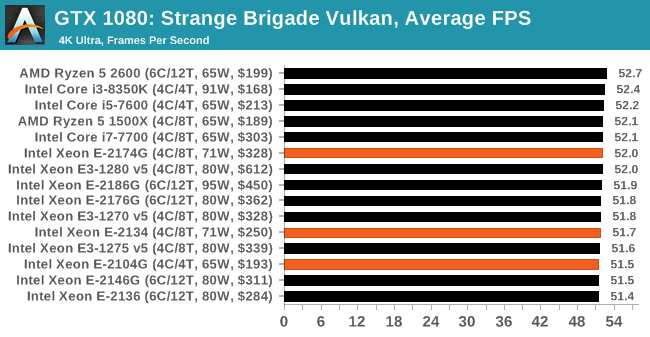

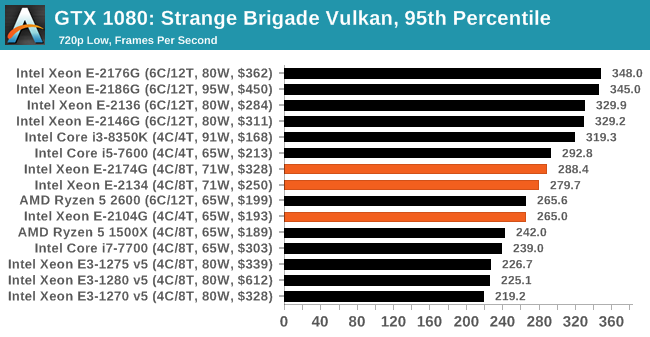

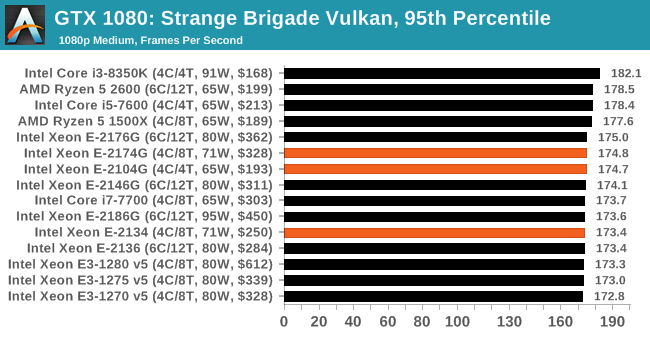

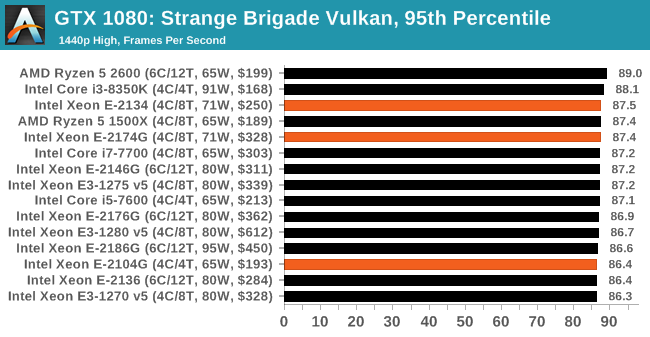

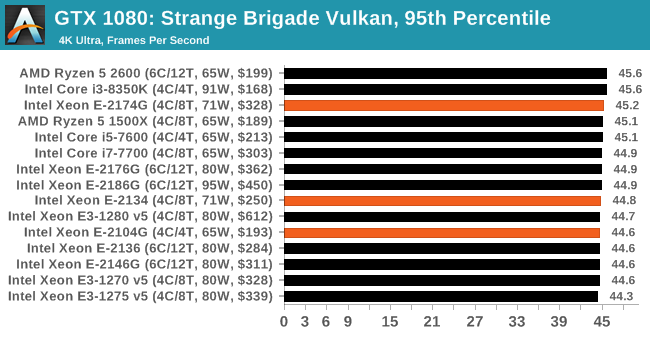

Gaming: Strange Brigade (DX12, Vulkan)

Strange Brigade is based in 1903’s Egypt and follows a story which is very similar to that of the Mummy film franchise. This particular third-person shooter is developed by Rebellion Developments, which is more widely known for games such as the Sniper Elite and Alien vs Predator series. The game follows the hunt for Seteki the Witch Queen, who has arisen once again, and the only ‘troop’ who can ultimately stop her. Gameplay is cooperative-centric, with a wide variety of different levels and many puzzles which need solving by the British colonial Secret Service agents sent to put an end to her reign of barbarism and brutality.

The game supports both the DirectX 12 and Vulkan APIs and houses its own built-in benchmark which offers various options up for customization including textures, anti-aliasing, reflections, draw distance and even allows users to enable or disable motion blur, ambient occlusion and tessellation among others. AMD has boasted previously that Strange Brigade is part of its Vulkan API implementation offering scalability for AMD multi-graphics card configurations.

| AnandTech CPU Gaming 2019 Game List | ||||||||

| Game | Genre | Release Date | API | IGP | Low | Med | High | |

| Strange Brigade* | FPS | Aug 2018 | DX12 Vulkan | 720p Low | 1080p Medium | 1440p High | 4K Ultra | |

| *Strange Brigade is run in DX12 and Vulkan modes | ||||||||

All of our benchmark results can also be found in our benchmark engine, Bench.

Results are charted as average FPS and 95th percentile frame rates at the IGP, Low, Medium, and High settings.

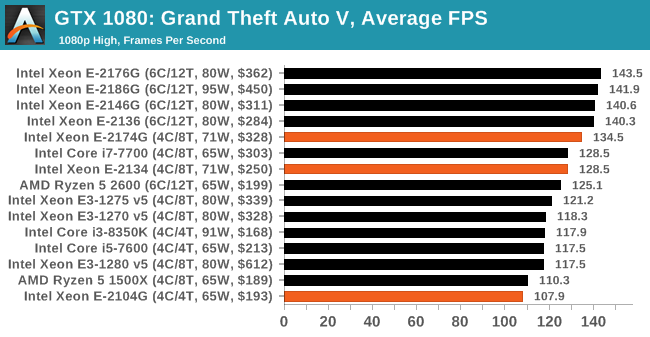

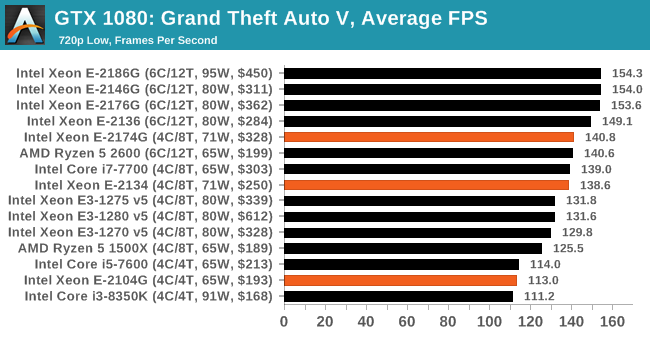

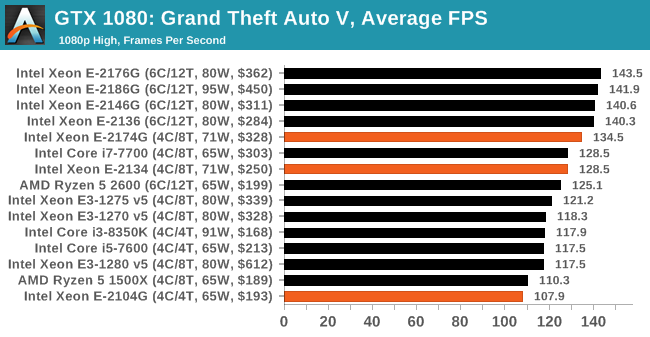

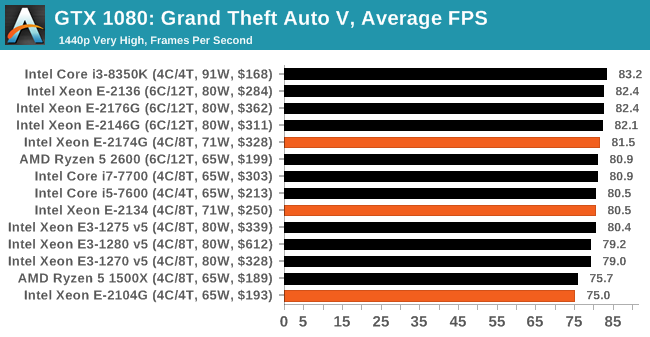

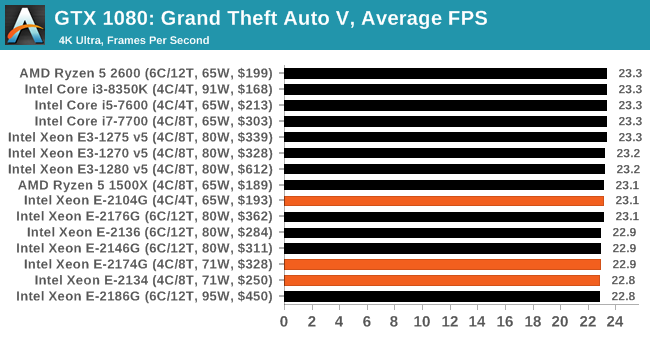

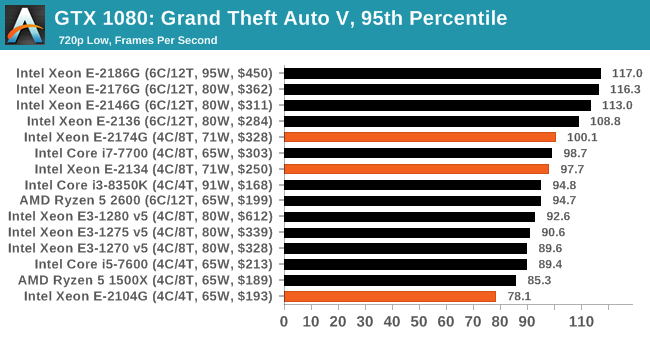

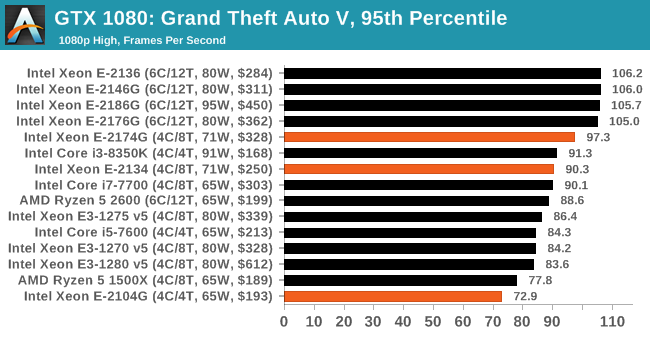

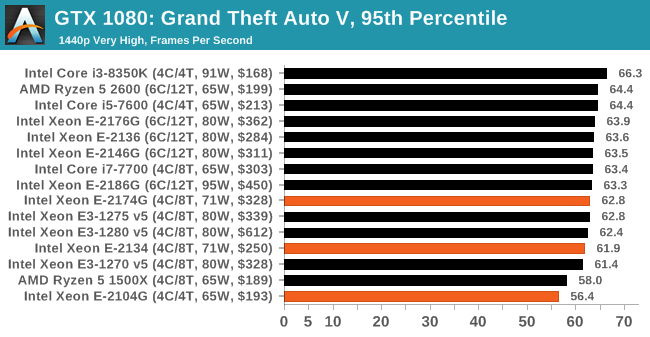

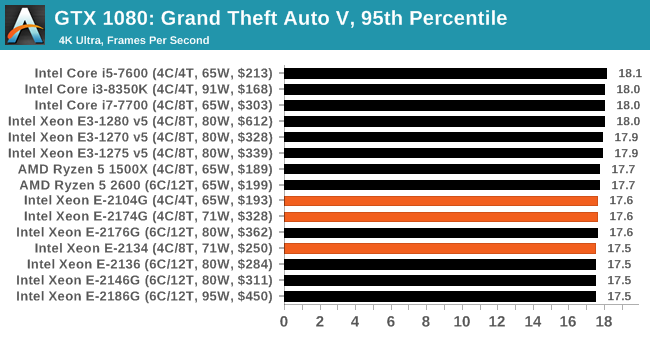

Gaming: Grand Theft Auto V

The highly anticipated iteration of the Grand Theft Auto franchise hit the shelves on April 14th 2015, with both AMD and NVIDIA in tow to help optimize the title. GTA doesn’t provide graphical presets, but opens up the options to users and extends the boundaries by pushing even the hardest systems to the limit using Rockstar’s Advanced Game Engine under DirectX 11. Whether the user is flying high in the mountains with long draw distances or dealing with assorted trash in the city, when cranked up to maximum it creates stunning visuals but hard work for both the CPU and the GPU.

For our test we have scripted a version of the in-game benchmark. The in-game benchmark consists of five scenarios: four short panning shots with varying lighting and weather effects, and a fifth action sequence that lasts around 90 seconds. We use only the final part of the benchmark, which combines a flight scene in a jet followed by an inner city drive-by through several intersections followed by ramming a tanker that explodes, causing other cars to explode as well. This is a mix of distance rendering followed by a detailed near-rendering action sequence, and the title thankfully spits out frame time data.

There are no presets for the graphics options on GTA, allowing the user to adjust options such as population density and distance scaling on sliders, but others such as texture/shadow/shader/water quality from Low to Very High. Other options include MSAA, soft shadows, post effects, shadow resolution and extended draw distance options. There is a handy option at the top which shows how much video memory the options are expected to consume, with obvious repercussions if a user requests more video memory than is present on the card (although there’s no obvious indication if you have a low end GPU with lots of GPU memory, like an R7 240 4GB).

| AnandTech CPU Gaming 2019 Game List | ||||||||

| Game | Genre | Release Date | API | IGP | Low | Med | High | |

| Grand Theft Auto V | Open World | Apr 2015 | DX11 | 720p Low | 1080p High | 1440p Very High | 4K Ultra | |

All of our benchmark results can also be found in our benchmark engine, Bench.

Results are charted as average FPS and 95th percentile frame rates at the IGP, Low, Medium, and High settings.

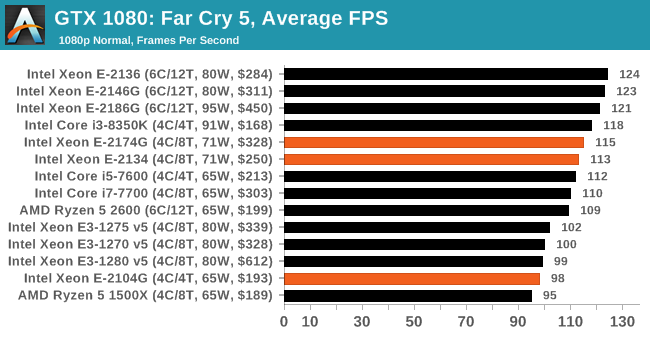

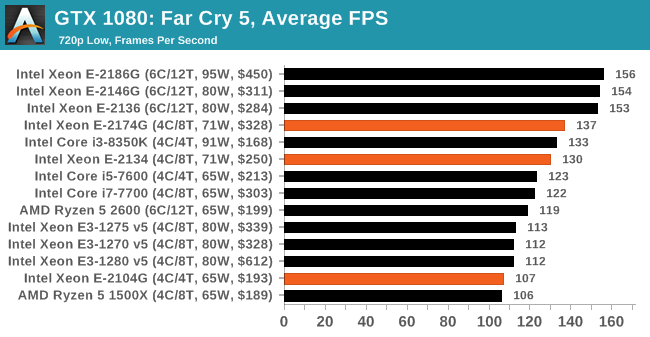

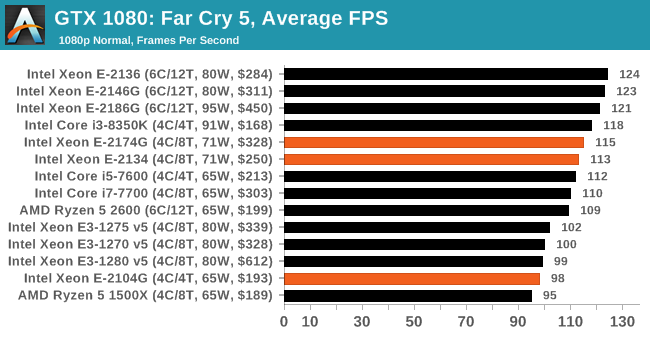

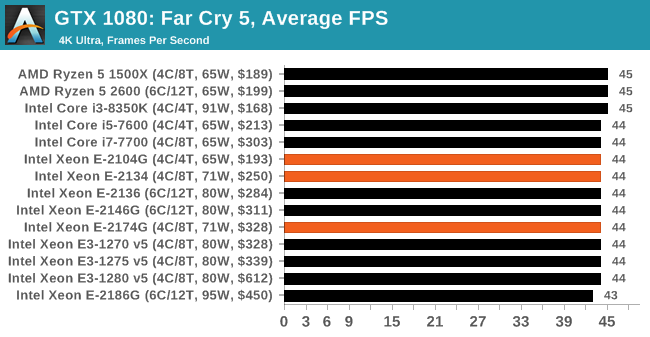

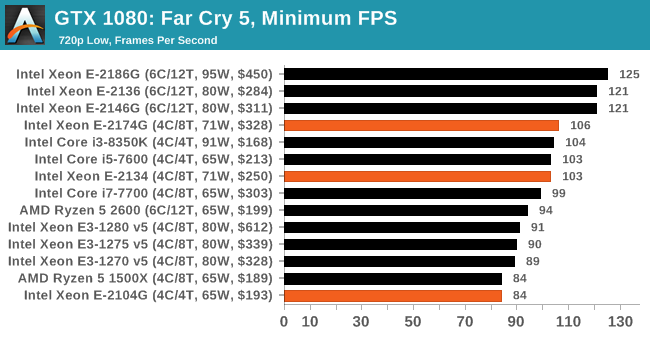

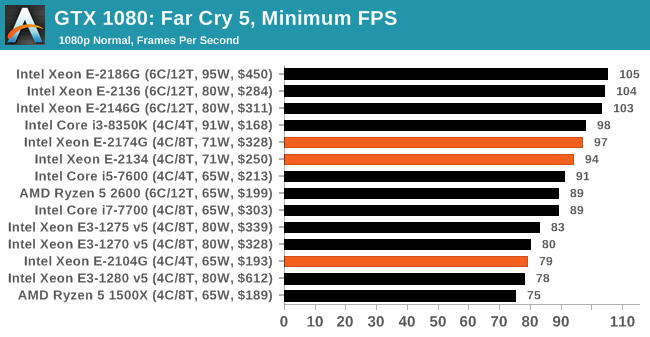

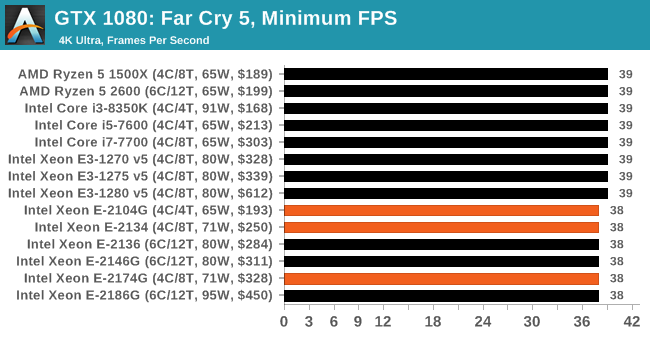

Gaming: Far Cry 5

The latest title in Ubisoft's Far Cry series lands us right into the unwelcoming arms of an armed militant cult in Montana, one of the many middles-of-nowhere in the United States. With a charismatic and enigmatic adversary, gorgeous landscapes of the northwestern American flavor, and lots of violence, it is classic Far Cry fare. Graphically intensive in an open-world environment, the game mixes in action and exploration.

Far Cry 5 does support Vega-centric features with Rapid Packed Math and Shader Intrinsics. Far Cry 5 also supports HDR (HDR10, scRGB, and FreeSync 2). We use the in-game benchmark for our data, and report the average/minimum frame rates.

| AnandTech CPU Gaming 2019 Game List | |||||||

| Game | Genre | Release Date | API | IGP | Low | High | |

| Far Cry 5 | FPS | Mar 2018 | DX11 | 720p Low | 1080p Normal | 4K Ultra | |

All of our benchmark results can also be found in our benchmark engine, Bench.

Results are charted as average FPS and 95th percentile frame rates at the IGP, Low, and High settings.

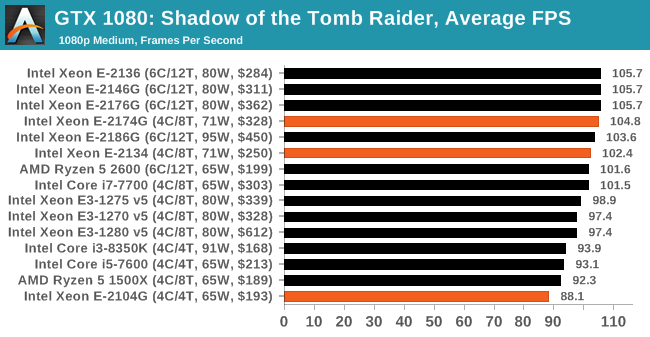

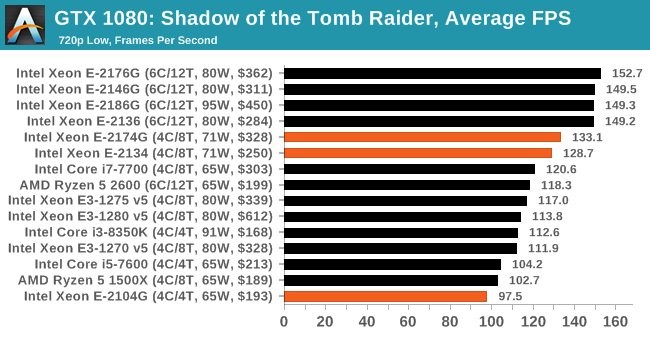

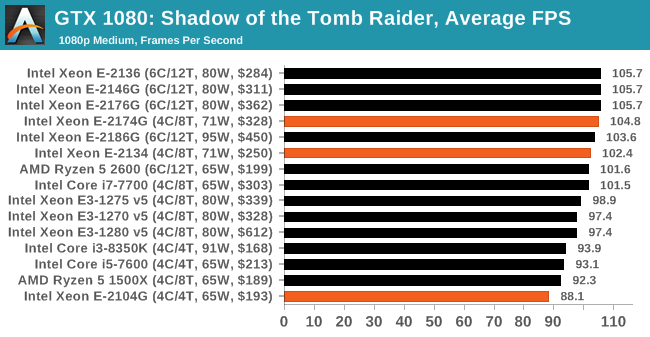

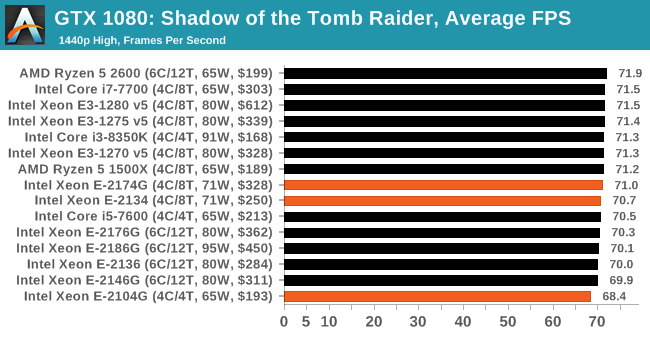

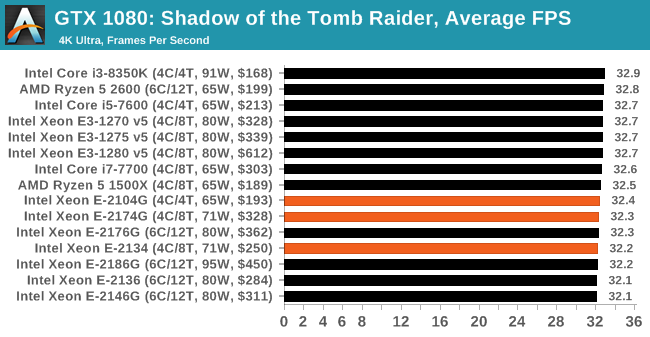

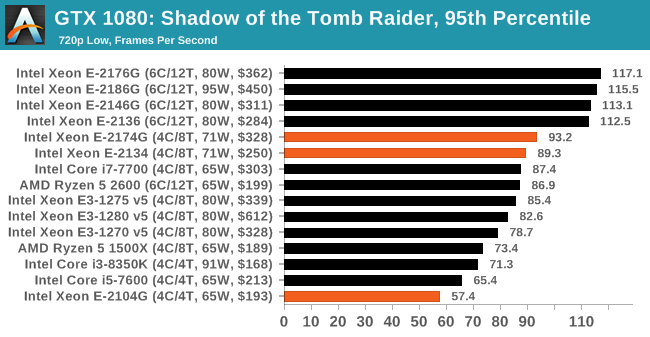

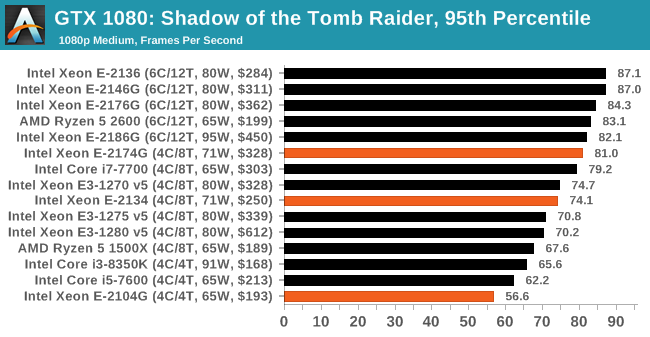

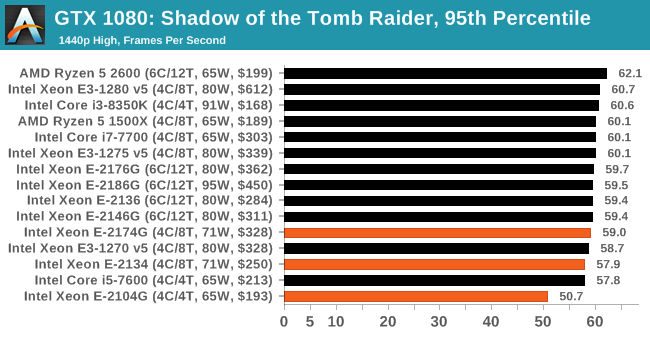

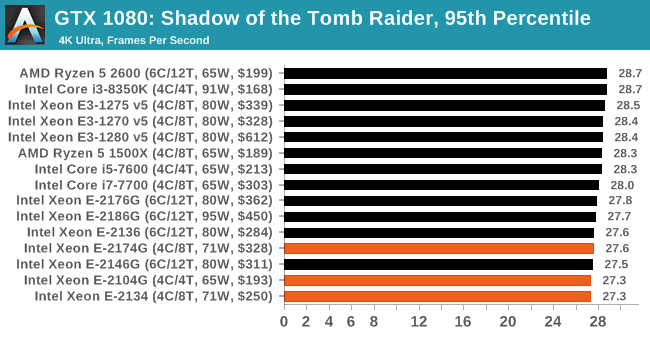

Gaming: Shadow of the Tomb Raider (DX12)

The latest instalment of the Tomb Raider franchise does less rising and lurks more in the shadows with Shadow of the Tomb Raider. As expected, this action-adventure follows Lara Croft, the main protagonist of the franchise, as she muscles through the Mesoamerican and South American regions looking to stop a Mayan apocalypse she herself unleashed. Shadow of the Tomb Raider is the direct sequel to Rise of the Tomb Raider; it was developed by Eidos Montreal and Crystal Dynamics, published by Square Enix, and hit shelves across multiple platforms in September 2018. This title effectively closes the Lara Croft Origins story and received critical acclaim upon its release.

The integrated Shadow of the Tomb Raider benchmark is similar to that of the previous game Rise of the Tomb Raider, which we have used in our previous benchmarking suite. The newer Shadow of the Tomb Raider uses DirectX 11 and 12, with this particular title being touted as having one of the best implementations of DirectX 12 of any game released so far.

| AnandTech CPU Gaming 2019 Game List | ||||||||

| Game | Genre | Release Date | API | IGP | Low | Med | High | |

| Shadow of the Tomb Raider | Action | Sep 2018 | DX12 | 720p Low | 1080p Medium | 1440p High | 4K Highest | |

All of our benchmark results can also be found in our benchmark engine, Bench.

Results are charted as average FPS and 95th percentile frame rates at the IGP, Low, Medium, and High settings.

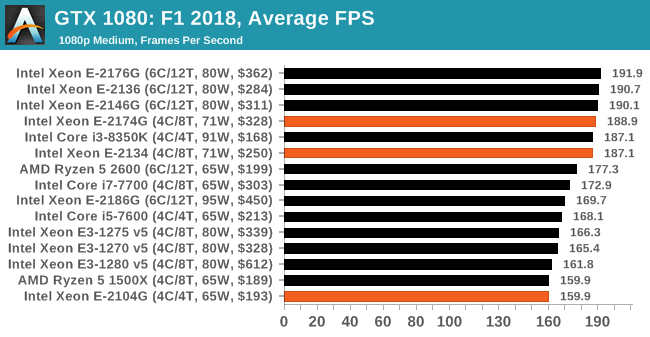

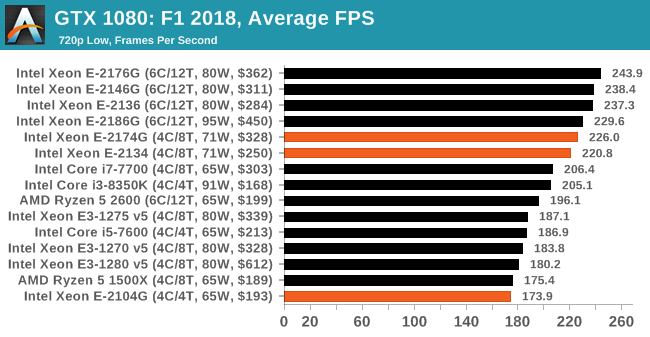

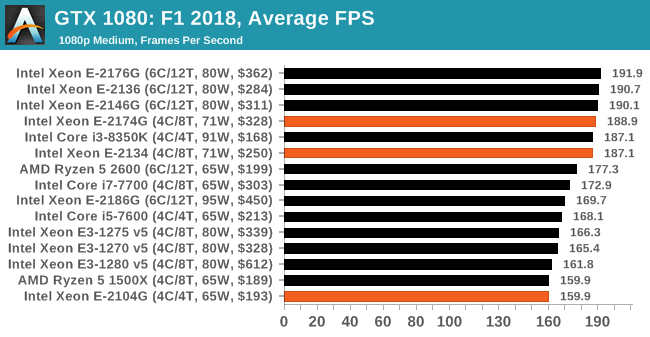

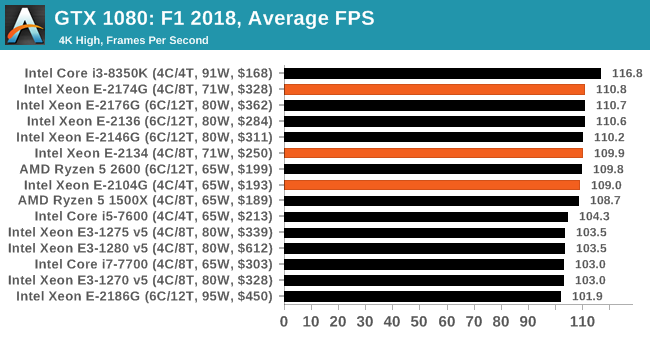

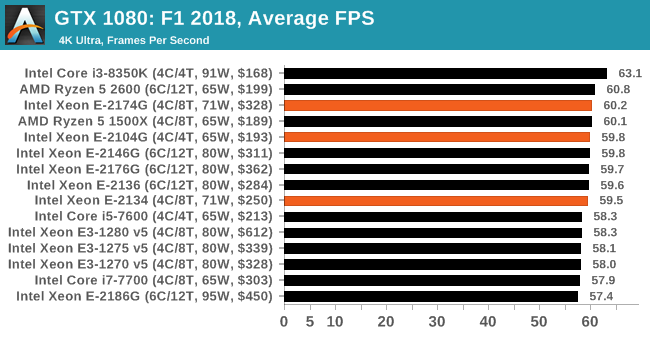

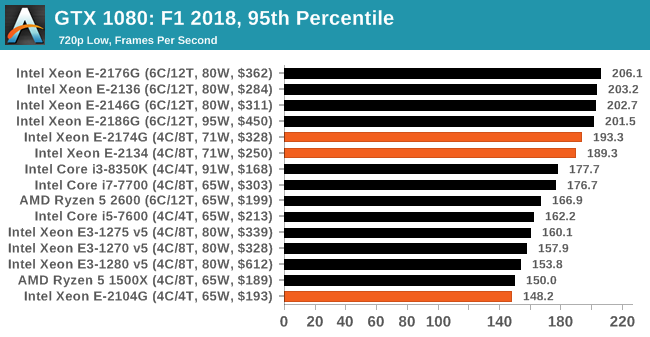

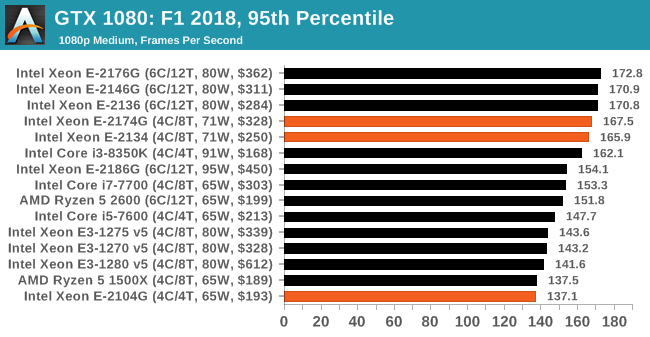

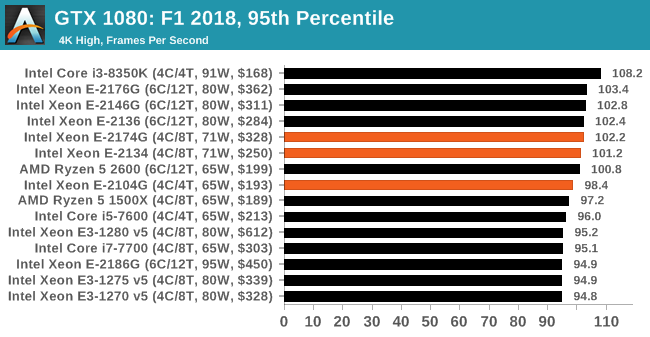

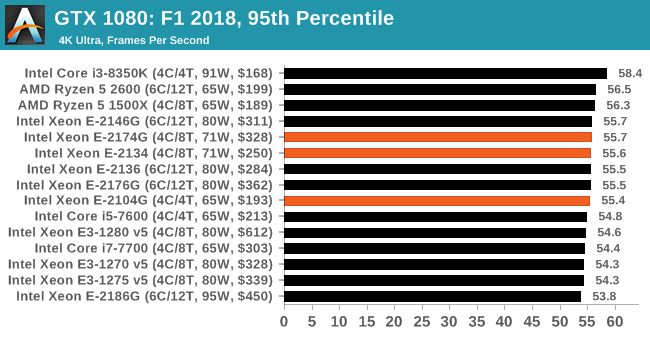

Gaming: F1 2018

Aside from keeping up-to-date on the Formula One world, F1 2017 added HDR support, which F1 2018 has maintained; otherwise, we should see any newer versions of Codemasters' EGO engine find its way into F1. Graphically demanding in its own right, F1 2018 keeps a useful racing-type graphics workload in our benchmarks.

We use the in-game benchmark, set to run on the Montreal track in the wet, driving as Lewis Hamilton from last place on the grid. Data is taken over a one-lap race.

| AnandTech CPU Gaming 2019 Game List | ||||||||

| Game | Genre | Release Date | API | IGP | Low | Med | High | |

| F1 2018 | Racing | Aug 2018 | DX11 | 720p Low | 1080p Med | 4K High | 4K Ultra | |

All of our benchmark results can also be found in our benchmark engine, Bench.

Results are charted as average FPS and 95th percentile frame rates at the IGP, Low, Medium, and High settings.

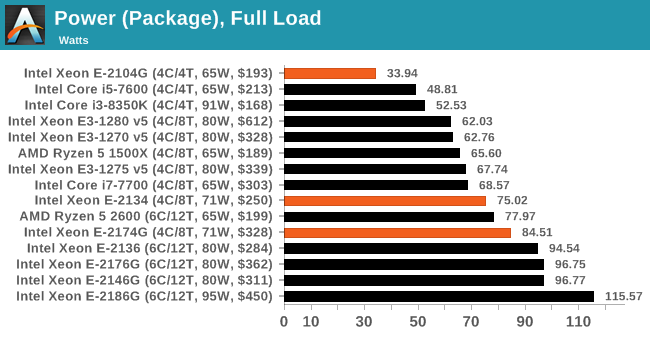

Power Consumption

Users who normally deal with consumer processors will likely know that consumer processors in consumer motherboards, especially for Intel, can consume a lot more power than their TDP rating. This is because Thermal Design Power (TDP) is not strictly a measure of power consumption, but of cooling performance required. Intel and AMD treat the TDP rating differently - for AMD it relates to peak performance when all cores are loaded, but for Intel it becomes related to the long-term steady state performance.

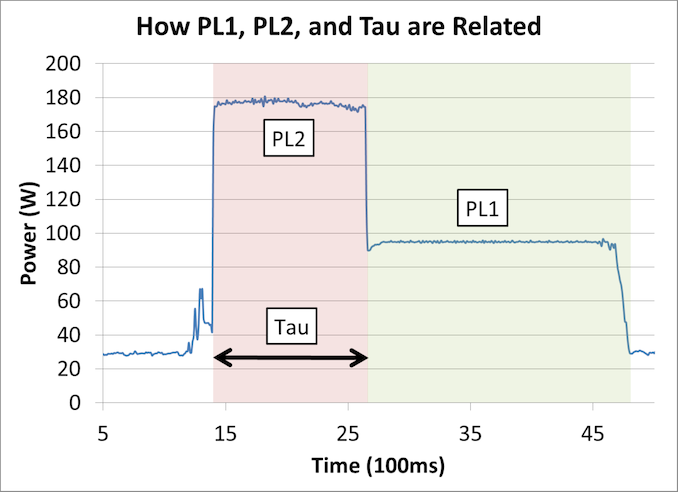

Intel defines several power levels (PL) for its processors, but the key ones are PL1 and PL2: the power level for sustained performance and the power level for short term turbo performance respectively. Normally PL1 is equal to the TDP, and PL2 is set to 1.25x the PL1. A CPU will also have a time parameter defined, telling the system how long PL2 is sustained before PL1 kicks in. It looks like this:

I wrote an article going deep into this issue, and it is worth reading for anyone interested in how turbo, performance, and power are related. Here is the link:

https://www.anandtech.com/show/13544/why-intel-processors-draw-more-power-than-expected-tdp-turbo

These values, PL1, PL2, and the time variable, are all configurable by the motherboard manufacturer. Whatever Intel states as the recommended value can be overridden, and motherboard manufacturers often accept higher power draw in exchange for better sustained frequencies if the board is designed above Intel’s base specifications.

This matters when looking at consumer and commercial products. Consumer hardware, such as the Core i3, i5, i7, and i9 processors, when paired with a consumer motherboard, often ignores PL1 and runs at PL2 levels of performance all day. This enables users of those motherboards to complete long tasks quicker, because the motherboard manufacturer has designed for it.

For commercial systems, such as Xeon E, we find that motherboard manufacturers prefer to adhere to the power profiles rigidly. In this market, Intel guarantees performance with defined parameters, and this market typically insists on staying within those specifications. This means that for two processors with equal specifications, one consumer and one commercial, the commercial one will draw less power but perform worse in long tests. This is by and large what we have seen in our benchmarks, and our power testing.
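To make the difference between the two behaviours concrete, here is a minimal Python sketch of the PL1/PL2/time relationship described above. It is a simplified step model under stated assumptions, not Intel's actual algorithm: real processors track a running average of power rather than a hard timer. The names (`PowerLimits`, `limits_from_tdp`, `power_cap`, `average_cap`) and the 60 W TDP and 28 second window are made up for illustration and are not the specifications of any chip in this review. The 1.25x ratio is the usual default mentioned above.

```python
from dataclasses import dataclass


@dataclass
class PowerLimits:
    pl1_watts: float    # sustained limit, normally equal to TDP
    pl2_watts: float    # short-term turbo limit
    tau_seconds: float  # how long PL2 is allowed before PL1 applies


def limits_from_tdp(tdp_watts: float, tau_seconds: float,
                    pl2_ratio: float = 1.25) -> PowerLimits:
    """Build limits using the usual defaults: PL1 = TDP, PL2 = 1.25 x PL1."""
    return PowerLimits(tdp_watts, tdp_watts * pl2_ratio, tau_seconds)


def power_cap(limits: PowerLimits, t: float, enforce_pl1: bool = True) -> float:
    """Power cap in watts at t seconds into a sustained load (step model).

    enforce_pl1=False mimics a board that ignores PL1 and stays at PL2.
    """
    if not enforce_pl1 or t < limits.tau_seconds:
        return limits.pl2_watts
    return limits.pl1_watts


def average_cap(limits: PowerLimits, duration: float,
                enforce_pl1: bool = True) -> float:
    """Average power cap in watts over a load lasting `duration` seconds."""
    if not enforce_pl1:
        return limits.pl2_watts
    turbo_time = min(duration, limits.tau_seconds)
    sustained_time = max(0.0, duration - limits.tau_seconds)
    energy = turbo_time * limits.pl2_watts + sustained_time * limits.pl1_watts
    return energy / duration


# Hypothetical part: 60 W TDP, 28 s turbo window (illustrative values only).
limits = limits_from_tdp(60.0, tau_seconds=28.0)
print(limits.pl2_watts)                               # 75.0
print(average_cap(limits, 60.0))                      # 67.0, limits enforced
print(average_cap(limits, 60.0, enforce_pl1=False))   # 75.0, PL1 ignored
```

In this model the board that enforces PL1 averages 67 W over a one minute load while the board that ignores it holds 75 W throughout, which is the shape of the gap between commercial and consumer platforms in long tests.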

Over short intervals, the parts that get anywhere near their power limits rise to their PL2 values, up to 100W, and then fall back to their TDP values. The E-2104G, however, does not have hyperthreading, so it never actually goes near its PL1 or PL2 values, making it a very efficient part.

Quad Core Xeon-E Conclusion

In this review, we focused on the results from our quad core Xeon E testing. These processors form part of Intel's portfolio that caters to small and medium businesses that want managed office systems with reasonable performance, or entry level SOHO servers and storage platforms. In this market, Intel is competing against AMD's Ryzen Pro line of CPUs.

The processors we tested for this review are Intel's first generation of Xeon Entry chips, which would have been the E3-1200 v7 family if it were not for the recent name change across Intel's processor lines. This hardware is based on Intel's Coffee Lake microarchitecture, the latest generation of Intel's 14nm family of processors, and Intel offers a variety of quad and six core parts, some with integrated graphics and some without.

By and large, we weren't expecting to see anything radical from the E-2174G, the E-2134, and the E-2104G that we tested for this review. The results are run of the mill, and the E-2174G sits above the other quad cores in all tests, as we expected it to.

The E-2104G is perhaps the odd one out here, as it doesn't form part of Intel's regular Xeon E product family. This processor is classified as 'off-roadmap', which means it wasn't part of Intel's original plan and it was built for specific customers with requests for specific performance levels or to hit a certain price point. These chips rarely find their way to retail, and being the lowest processor of the Xeon E quad core line, it certainly performs as such.

Users who need Xeon E parts are typically looking for prebuilt machines with mainstream levels of performance, but with requirements for manageability and/or ECC memory. Without any of those requirements, Intel's consumer processor line offers more system performance and higher specs for the same system price. AMD's Ryzen Pro line is an alternative to Xeon E, offering manageability through the DASH interface, and scales from quad core to eight core. If we can get samples of the Ryzen Pro line, we'll test them as well to see how they stand up to Intel.

Where this processor line goes next is a hard question to answer. We've seen hints that Intel might launch eight core versions of Xeon E, although we expected those in the market last year and they haven't materialised. We expect Intel to move to 10nm for its next gen processor line, but the earliest those parts will be ready seems to be 2020. The mainstream Xeon E processors typically come out six to nine months after the consumer versions do, so we might be looking at the end of 2020 before the next generation of Xeon E is released (unless Intel surprises us in late 2019 with another generation on 14nm).

We also reviewed the Xeon Entry six-core processors: Intel Xeon E Six-Core Review: